ERP Scalability Problem: When the System That Got You Here Cannot Get You There

You close a major contract, the largest in the company’s history. The sales team is celebrating. Then the operations director walks in with a problem: the system cannot handle the volume. Queries that used to run in seconds are timing out. The interface lags on every workstation during peak hours. Onboarding another 20 users to support the new contract is not an IT project. It is an existential question about whether the infrastructure can survive the growth it was built to enable.

This is the Scalability Wall. It is one of the most strategically damaging conditions a growing enterprise can face, because it forces leadership into a choice that should never exist: throttle growth to protect the system, or push the system until it fails. Some organizations have declined contracts, delayed expansions, or hired extra administrative staff to compensate for a system that cannot scale. For them, the cost is not theoretical. It is the direct dollar value of every opportunity the infrastructure could not support. Phoenix Consultants Group eliminates this constraint by deploying the FireFlight Data System on a performance-tuned, modular architecture engineered to expand with your operational volume, so your technology scales with your sales team, not against it.

Why Do Legacy Systems Fail During Expansion?

Most traditional ERPs are built on monolithic architectures: a single, unified codebase where every function shares the same processing resources and the same database connections. This design is efficient at the scale it was built for. As transaction volume increases, however, three things happen:

- the number of concurrent database queries grows with it;
- the processing load on shared resources compounds;
- response times degrade.

The architecture was designed for a specific workload ceiling. Once the business exceeds that ceiling, the system does not degrade gracefully. It slows down disproportionately, then fails.

The analogy is structural. Scaling a monolithic ERP to handle 10x transaction volume is like building a skyscraper on a foundation designed for a two-story house. The foundation was not inadequate for its original purpose. It is inadequate for a purpose it was never designed to serve. The correct response is not a better patch or a larger server. It is a different foundation, built from modular, independently scalable components. In that design, increased volume in one area does not degrade performance across the entire system, and capacity can be expanded without a structural rethink.

The Throughput Capacity Matrix: Monolithic vs. Modular Architecture

The following table maps the documented performance trajectory of a monolithic legacy ERP against FireFlight’s modular, performance-tuned architecture across four transaction volume milestones. Performance figures reflect relative system response time and operational reliability, not theoretical maximums.

| Transaction Volume | Legacy Monolith: Response & Reliability | Operational Cost of Degradation | FireFlight Modular: Response & Reliability |
| --- | --- | --- | --- |
| Baseline (current volume) | 100%: acceptable performance | Minimal | 100%: optimized baseline |
| 2x growth | ~65%: noticeable lag; staff productivity impacted | 8–15 hrs/week lost to workarounds | 100%: consistent; no reconfiguration needed |
| 5x growth | ~30%: frequent timeouts; production disruptions | 20–35 hrs/week lost; emergency IT intervention | 100%: performance-tuned SQL handles the load |
| 10x growth | Critical failure: system cannot sustain the load | $10K–$50K+ per downtime incident | Sustained: modular components scale independently |

The degradation curve on a monolithic architecture is not linear. It steepens as shared resources approach saturation. Each increase in transaction volume imposes a disproportionately larger processing burden on those shared resources. The table shows the result: the legacy system keeps roughly 65% of its baseline responsiveness at 2x volume, only about 30% at 5x, and fails outright at 10x. FireFlight’s modular SQL Server architecture is designed to avoid this curve. Components that handle high-volume transaction types are independently tuned and can be scaled without affecting the performance of adjacent modules.
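The textbook M/M/1 queueing model helps show why response time climbs nonlinearly as a shared resource fills up. In that model, mean time in the system is W = 1 / (μ − λ), where μ is the service rate and λ is the arrival rate. This is a swapped-in standard model, not a measurement of FireFlight or of any particular ERP. The service rate and baseline arrival rate below are hypothetical.

```python
def mm1_response_time(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system for an M/M/1 queue: W = 1 / (mu - lambda).

    Returns infinity once arrivals reach capacity, because the queue then
    grows without bound.
    """
    if arrival_rate >= service_rate:
        return float("inf")
    return 1.0 / (service_rate - arrival_rate)


def response_time_table(baseline_rate, service_rate, multipliers):
    """Pair each load multiplier with the modeled mean response time (seconds)."""
    return [(m, mm1_response_time(baseline_rate * m, service_rate)) for m in multipliers]


# Hypothetical shared resource: completes 100 requests/s; baseline load is 40 requests/s.
for multiplier, seconds in response_time_table(40.0, 100.0, (1.0, 2.0, 2.4, 2.5)):
    label = "saturated" if seconds == float("inf") else f"{seconds * 1000:.1f} ms"
    print(f"{multiplier:>4}x load: {label}")
```

Under these assumed rates, doubling the load triples the mean response time, from about 16.7 ms to 50 ms. A further 20% increase, to 2.4x, raises it to 250 ms. At 2.5x the queue never drains.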

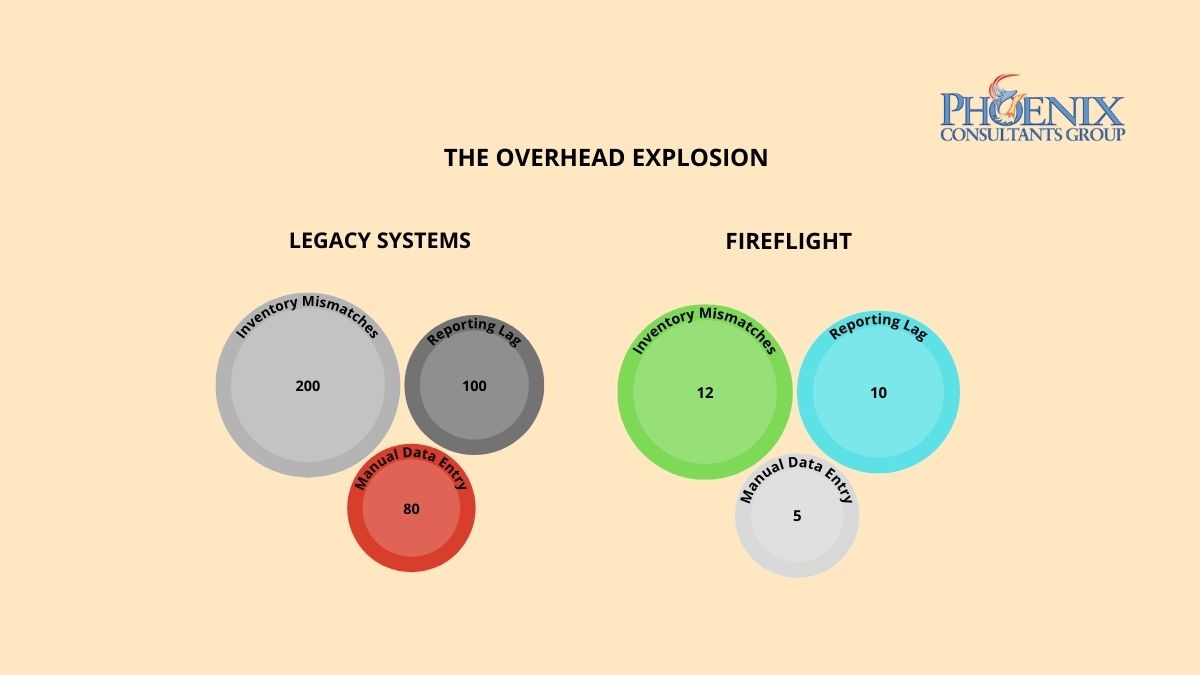

The Strategic Friction Audit: Three Signs You Have Hit the Scalability Wall

These three operational patterns indicate that your current architecture has reached its functional ceiling. Each one represents a category of growth constraint that compounds over time. The longer the underlying infrastructure problem goes unaddressed, the more the business adapts to work around it, and the more expensive those adaptations become.

- The Performance Lag: Your staff reports that the system runs noticeably slower during peak hours, at month-end, or during high-order-volume periods. If system performance is time-dependent or volume-dependent, the architecture has a fixed throughput ceiling and your business is already operating near it. The next contract that doubles your order volume will not slow the system further. It will break it.

- The Integration Struggle: Adding a new department, production line, or operational function requires months of custom development. The new function is not complex. The difficulty is threading it into the existing monolithic architecture without triggering a conflict or a performance regression, which demands careful, time-consuming manual integration. In a modular architecture, new functions are added as new modules. In a monolithic architecture, every addition is surgery on a system with no clear separation of concerns.

- The Manual Backup: Your organization has hired additional administrative staff specifically to handle data entry, order processing, or reporting, which is work the system is too slow or too limited to handle automatically. This is the most financially invisible form of scalability failure: the cost appears as a payroll line item, not as a technology expense. But it is a direct consequence of an infrastructure that cannot scale, and it grows with every new operational demand placed on the same limited system.
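You can test the first sign against your own data by comparing high-percentile response times in peak hours with those in off-peak hours. The sketch below is not a PCG diagnostic. It rests on these assumptions:

- you can export (hour_of_day, latency_ms) pairs from an application or database log;
- the function names, the peak-hour set, and the 2x flagging threshold are hypothetical choices for illustration.

```python
from math import ceil

LAG_RATIO_THRESHOLD = 2.0  # hypothetical cut-off for "noticeably slower at peak"


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct in the range 0-100]."""
    ordered = sorted(values)
    rank = max(ceil(pct / 100 * len(ordered)), 1)
    return ordered[rank - 1]


def peak_lag_ratio(samples, peak_hours, pct=95):
    """Ratio of peak-hour to off-peak latency at the given percentile.

    samples: iterable of (hour_of_day, latency_ms) pairs.
    """
    peak, off_peak = [], []
    for hour, latency_ms in samples:
        (peak if hour in peak_hours else off_peak).append(latency_ms)
    if not peak or not off_peak:
        raise ValueError("need samples from both peak and off-peak hours")
    return percentile(peak, pct) / percentile(off_peak, pct)


# Hypothetical log extract: afternoons (14:00-17:59) are the suspected peak.
samples = [(9, 200), (10, 210), (20, 220), (21, 230),
           (14, 800), (15, 900), (16, 1000), (17, 1100)]
ratio = peak_lag_ratio(samples, peak_hours={14, 15, 16, 17})
print(f"p95 peak/off-peak ratio: {ratio:.2f}")
if ratio >= LAG_RATIO_THRESHOLD:
    print("Latency is load-dependent: likely operating near a throughput ceiling.")
```

A ratio that stays near 1.0 suggests performance is not time-dependent. A ratio that rises well above 1.0 during known busy periods is the pattern the Performance Lag sign describes.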

Architecture Over Features: Built for Elastic Performance

Generic ERP vendors compete on feature lists and user interface design. They rarely publish performance benchmarks for high-transaction-volume environments because their monolithic architectures do not perform well under those conditions. Phoenix Consultants Group competes on infrastructure: the performance characteristics of the underlying architecture are the product, not the visual design of the dashboard.

FireFlight is built on .NET 8 and ASP.NET Core Razor Pages. It is backed by a SQL Server architecture that is performance-tuned for high-volume, concurrent transaction environments.

- Data compression at the database level reduces storage and retrieval overhead as transaction volumes scale.
- Query optimization is built into the core architecture, not applied as an afterthought when performance problems surface.
- The hosting environment is configured for low maintenance overhead and high availability.
- Role-based access controls limit which users can run which queries, so unauthorized or inefficient query patterns from individual users do not degrade the transaction processing layer.
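A role-based gate of this kind can be sketched as a policy table. The table decides which reports each role may run and caps result sizes before a query reaches the database. FireFlight's actual access-control model is not documented here. The role names, report names, and row limits below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryPolicy:
    allowed_reports: frozenset
    max_rows: int


# Hypothetical roles and limits, for illustration only.
POLICIES = {
    "clerk": QueryPolicy(frozenset({"open_orders"}), max_rows=500),
    "analyst": QueryPolicy(frozenset({"open_orders", "monthly_sales"}), max_rows=50_000),
}


def authorize_query(role: str, report: str, requested_rows: int) -> int:
    """Reject reports a role may not run; otherwise return the permitted row cap."""
    policy = POLICIES.get(role)
    if policy is None or report not in policy.allowed_reports:
        raise PermissionError(f"role {role!r} may not run report {report!r}")
    # Cap result size so a single user cannot trigger an unbounded scan.
    return min(requested_rows, policy.max_rows)


print(authorize_query("clerk", "open_orders", 10_000))
```

The design choice is to reject or trim a request before it executes. That is cheaper than cancelling a long-running query after it has already consumed shared database resources.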

The modular design of the FireFlight system is the structural mechanism that enables scaling without an architectural rethink. Each functional module (inventory, scheduling, billing, compliance, project management) operates as an independently tunable component. Every module shares the centralized SQL Server database but keeps its processing logic separate from the other modules. When a specific module experiences a volume spike, its performance can be tuned without touching adjacent modules. New modules are added without modifying the existing architecture. The system grows by extension, not by replacement. That is precisely what distinguishes scalable architecture from the monolithic model it replaces.
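The grow-by-extension idea can be sketched as a registry in which each module owns its own tables in a shared database. Installing a new module adds schema without editing existing modules. This is a minimal illustration, not FireFlight's internals. It assumes:

- SQLite in memory stands in for the centralized SQL Server database;
- the class and table names are hypothetical.

```python
import sqlite3


class Module:
    """Hypothetical module contract: a name plus a hook that creates its own tables."""

    name = "base"

    def install(self, conn: sqlite3.Connection) -> None:
        raise NotImplementedError


class InventoryModule(Module):
    name = "inventory"

    def install(self, conn):
        conn.execute("CREATE TABLE inventory_items (sku TEXT PRIMARY KEY, qty INTEGER NOT NULL)")


class SchedulingModule(Module):
    name = "scheduling"

    def install(self, conn):
        conn.execute("CREATE TABLE scheduling_jobs (id INTEGER PRIMARY KEY, starts_at TEXT NOT NULL)")


class ModularSystem:
    """Shares one database connection; modules are added, never rewritten."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.modules: dict[str, Module] = {}

    def add_module(self, module: Module) -> None:
        if module.name in self.modules:
            raise ValueError(f"module {module.name!r} is already installed")
        module.install(self.conn)  # creates only the new module's tables
        self.modules[module.name] = module

    def tables(self) -> list[str]:
        rows = self.conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
        return [name for (name,) in rows]


system = ModularSystem(sqlite3.connect(":memory:"))
system.add_module(InventoryModule())
system.add_module(SchedulingModule())  # inventory_items is left untouched
print(system.tables())
```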

The Continuity Roadmap: Engineering for the Business You Are Building

Moving from a monolithic architecture to a scalable modular system requires a structured transition that addresses both the current performance ceiling and the future growth trajectory your business intends to pursue. PCG executes this transition in three phases, with measurable performance improvement beginning from the first full operational cycle after deployment.

- Load Audit and Architecture Assessment: PCG conducts a structured analysis of your current system’s performance profile. The analysis identifies the specific transaction types, concurrent user loads, and data volumes that generate the most friction. This audit maps your current throughput ceiling against your projected growth trajectory. It quantifies the gap between where your infrastructure performs acceptably and where your business strategy requires it to perform. The output is a prioritized list of the highest-impact architectural constraints and a FireFlight configuration plan designed to address each one.

- Modular Migration and Performance Tuning: PCG migrates your core business logic to the FireFlight modular system, configuring each module for your specific transaction patterns and volume profile. SQL Server performance tuning is applied at the deployment stage, not reactively when problems surface. Query optimization, data compression, and connection pooling are configured to the throughput requirements identified in the load audit. The migration runs in parallel with your live system, so current operations are not interrupted during the transition. Performance benchmarks are validated against live data before cutover, confirming that FireFlight meets or exceeds the throughput targets agreed during scoping.

- Growth-Ready Handoff: Once FireFlight is live, your leadership team has infrastructure configured for the growth trajectory your business is pursuing, not the volume it was processing when the old system was installed. New users, departments, transaction types, and operational modules are added to FireFlight without a system rebuild or a performance reconfiguration. Your technology investment scales with your revenue rather than constraining it. Your operations team adds capacity the same way your sales team closes deals: one unit at a time, without a structural ceiling.
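The load audit compares a throughput ceiling against a growth trajectory, and that core arithmetic can be sketched with compound growth. At a monthly growth rate g, volume V reaches ceiling C after log(C / V) / log(1 + g) months. The figures below are hypothetical placeholders. A real audit would measure the ceiling rather than assume it.

```python
import math


def months_to_ceiling(current_volume, ceiling_volume, monthly_growth):
    """Months until compound growth carries current volume up to the ceiling."""
    if current_volume >= ceiling_volume:
        return 0.0  # already at or past the ceiling
    if monthly_growth <= 0:
        return math.inf  # flat or shrinking volume never reaches it
    return math.log(ceiling_volume / current_volume) / math.log1p(monthly_growth)


def projected_volume(current_volume, monthly_growth, months):
    """Volume after `months` of compound growth."""
    return current_volume * (1 + monthly_growth) ** months


# Hypothetical audit inputs: 4,000 transactions/day today, acceptable performance
# measured up to 10,000/day, and a growth plan of 5% per month.
runway = months_to_ceiling(4_000, 10_000, 0.05)
print(f"Months of headroom: {runway:.1f}")
print(f"Volume in 24 months: {projected_volume(4_000, 0.05, 24):,.0f}/day")
```

The gap between the projected volume and the measured ceiling is the quantity the audit's configuration plan has to close.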

Evidence of Experience: Engineered for High-Volume Operational Environments

PCG built FireFlight’s performance-tuned architecture because the clients who most needed scalable infrastructure were the ones whose growth was actively being constrained by their existing systems. Allison Woolbert developed the modular scaling methodology after three decades of engineering data systems for high-volume environments. These include systems for ExxonMobil and Nabisco, where transaction throughput and data integrity must be maintained simultaneously under peak operational load.

That same performance standard is applied in PCG’s commercial deployments. For a Top-5 U.S. metro fleet, PCG delivered a secure, scalable fueling management system. In that environment, thousands of fueling transactions are processed daily across a distributed fleet, each requiring real-time authorization, inventory deduction, and financial recording. PCG engineered the architecture to maintain consistent sub-second response times under sustained high transaction volume. The system was designed to handle peak fleet operational load from day one. Its modular architecture ensures that future fleet expansion will not require a system replacement to absorb the additional transaction volume.

Authority FAQ: Scalability Objections, Answered Directly

What happens to system performance during an unexpected volume spike?

FireFlight’s SQL Server architecture includes query optimization and connection pooling configured specifically to absorb volume spikes without proportional performance degradation. Each module operates independently. As a result, a spike in transaction volume for one function, such as a high-order-volume sales period, does not degrade the performance of adjacent modules such as reporting or scheduling. During scoping, PCG’s load audit establishes your anticipated peak volume parameters. PCG then configures the hosting environment to handle those peaks without manual intervention.
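Connection pooling keeps a fixed set of database connections open. A burst of requests then waits briefly for a free connection instead of opening new connections without limit. The sketch below is a minimal illustration built on Python's `queue` module, with SQLite standing in for SQL Server. It is not FireFlight's configuration. In .NET, the SqlClient data provider pools connections itself, and limits are typically set through connection-string keywords such as `Max Pool Size`.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Fixed-size pool: connections are opened once and reused."""

    def __init__(self, factory, size: int):
        self._idle: queue.Queue = queue.Queue(maxsize=size)
        for _ in range(size):
            self._idle.put(factory())

    @contextmanager
    def connection(self, timeout: float = 5.0):
        # Wait up to `timeout` seconds for a free connection; raises queue.Empty
        # if none is released in time, instead of opening an unbounded number.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # always return the connection to the pool

    def idle_count(self) -> int:
        return self._idle.qsize()


# SQLite stands in for the real database; check_same_thread=False lets pooled
# connections be handed to worker threads.
pool = ConnectionPool(lambda: sqlite3.connect(":memory:", check_same_thread=False), size=2)
with pool.connection() as conn:
    print(conn.execute("SELECT 1").fetchone())
```

The timeout is the design lever. A spike makes requests queue for a bounded time rather than overwhelming the database server with new connections.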

Are there real limits to how much FireFlight can scale, or is the claim of ‘10x growth’ marketing language?

Every system has architectural limits. FireFlight’s limits are substantially higher than those of the monolithic systems it replaces, and they are designed to be extended through module-level tuning rather than full system replacement. The ‘10x growth’ benchmark is not a hard ceiling. It reflects the performance differential between a well-configured FireFlight deployment and a typical monolithic ERP at equivalent transaction volumes. For operations that project growth beyond that threshold, PCG holds a capacity planning conversation during the initial engagement. That conversation ensures the architecture is designed for your specific growth trajectory, not a generic estimate.

How does FireFlight maintain data integrity when transaction volume scales rapidly?

Data integrity under high volume is enforced at the architecture level, not the application level. FireFlight’s SQL Server deployment uses transaction-level integrity controls that maintain data accuracy regardless of concurrent transaction volume:

- ACID-compliant transactions;
- row-level locking;
- automated conflict resolution.

The real-time field validation layer stops incorrect data from entering the system before it is committed. As a result, the integrity of the database does not degrade as transaction frequency increases. Speed and accuracy are not in tension in the FireFlight architecture: the database engine enforces both simultaneously.
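The combination described here can be sketched with Python's built-in `sqlite3` module: validate fields first, then make every dependent write succeed or fail together. The example uses a fueling transaction like the fleet example earlier in this article. SQLite locks at the database level rather than the row level, so it only stands in for SQL Server here, and the schema and function names are hypothetical. The guarded UPDATE decrements inventory only if enough remains, which is one common way to stop concurrent writers from overdrawing the same balance.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    # CHECK constraints are a last line of defense inside the database engine.
    conn.executescript("""
        CREATE TABLE tanks (
            tank_id TEXT PRIMARY KEY,
            gallons REAL NOT NULL CHECK (gallons >= 0));
        CREATE TABLE vehicles (
            vehicle_id TEXT PRIMARY KEY,
            authorized INTEGER NOT NULL);
        CREATE TABLE fuel_ledger (
            id INTEGER PRIMARY KEY,
            vehicle_id TEXT NOT NULL,
            tank_id TEXT NOT NULL,
            gallons REAL NOT NULL CHECK (gallons > 0));
    """)


def record_fueling(conn, vehicle_id, tank_id, gallons):
    """Authorize, deduct inventory, and record the charge as one atomic unit."""
    # Field validation happens before anything is written.
    if isinstance(gallons, bool) or not isinstance(gallons, (int, float)) or gallons <= 0:
        raise ValueError("gallons must be a positive number")
    with conn:  # commits if the block succeeds, rolls back if it raises
        row = conn.execute(
            "SELECT authorized FROM vehicles WHERE vehicle_id = ?", (vehicle_id,)
        ).fetchone()
        if row is None or not row[0]:
            raise PermissionError(f"vehicle {vehicle_id!r} is not authorized")
        # Guarded decrement: changes a row only if enough fuel remains.
        cur = conn.execute(
            "UPDATE tanks SET gallons = gallons - ? WHERE tank_id = ? AND gallons >= ?",
            (gallons, tank_id, gallons),
        )
        if cur.rowcount != 1:
            raise RuntimeError("unknown tank or insufficient inventory")
        conn.execute(
            "INSERT INTO fuel_ledger (vehicle_id, tank_id, gallons) VALUES (?, ?, ?)",
            (vehicle_id, tank_id, gallons),
        )


conn = sqlite3.connect(":memory:")
create_schema(conn)
conn.executescript(
    "INSERT INTO tanks VALUES ('T1', 100);"
    "INSERT INTO vehicles VALUES ('V1', 1), ('V2', 0);"
)
record_fueling(conn, "V1", "T1", 30)
print(conn.execute("SELECT gallons FROM tanks WHERE tank_id = 'T1'").fetchone())
```

If any step raises, the context manager rolls back the whole unit. Inventory and the ledger therefore never disagree.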

Can we add new operational modules or departments to FireFlight as we grow, without rebuilding the system?

Yes. That is the core architectural advantage of the modular system. Each new module is added to the FireFlight architecture as an independent component that shares the centralized database but does not modify the existing module logic. A new department, production line, or geographic branch is onboarded by deploying its corresponding FireFlight module. Its workflow logic, permissions, and reporting interfaces are then configured. The existing modules continue operating without interruption. The modules already in production need no system rebuild, no migration event, and no performance reconfiguration.

Will the cost of running FireFlight increase significantly as we scale?

The cost profile of a FireFlight deployment is fundamentally different from a seat-license or transaction-fee model. FireFlight runs on performance-tuned hosting infrastructure rather than being priced per user or per transaction. The incremental cost of adding users or increasing transaction volume is therefore the marginal hosting cost of the additional capacity, not a proportional increase in licensing fees. For most mid-size operations, the total cost of ownership per transaction decreases as volume scales, because the fixed cost of the architecture is spread across a larger operational footprint.
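The per-transaction arithmetic behind this claim is a fixed cost spread over growing volume: cost per transaction = (fixed monthly cost + marginal cost × transactions) / transactions. The dollar figures below are hypothetical, chosen only to show the shape of the curve. They are not FireFlight pricing.

```python
def cost_per_transaction(fixed_monthly, marginal_per_txn, monthly_txns):
    """Average cost of one transaction when a fixed cost is shared across volume."""
    if monthly_txns <= 0:
        raise ValueError("monthly_txns must be positive")
    return (fixed_monthly + marginal_per_txn * monthly_txns) / monthly_txns


# Hypothetical: $5,000/month fixed hosting, $0.002 marginal cost per transaction.
for volume in (50_000, 200_000, 500_000):
    print(f"{volume:>7,} txns/month: ${cost_per_transaction(5_000, 0.002, volume):.4f} each")
```

With these assumed figures, a tenfold volume increase lowers the average from about $0.102 to $0.012 per transaction. The average approaches the marginal cost as the fixed share shrinks.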

About the Author

Allison Woolbert: CEO & Senior Systems Architect, Phoenix Consultants Group

Allison brings over 40 years of expertise in database architecture, enterprise system design, and custom software development. She has spent four decades solving the hardest data problems in business. Her clients range from Fortune 500 corporations to growing mid-size firms and small businesses. The industries span manufacturing, fleet management, healthcare staffing, and regulatory compliance. FireFlight Data System is the product of everything she has learned: a purpose-built engine designed to eliminate the structural failures she encountered and fixed throughout her career.