In 2026, Microsoft Access is not part of Microsoft's forward roadmap for enterprise data management. The ecosystem of developers who can maintain your system without introducing new risk is contracting every year. PCG has been migrating businesses off Access since 1995. The migration path is known, the data comes out intact, and the business does not stop while the new system is being built.¹

Why are so many businesses still running Microsoft Access in 2026?

The answer is not ignorance. It is fear, and that fear is rational. Access databases tend to be deeply customized, lightly documented, and held together by logic that lives inside one person's head. The moment that person leaves, the entire operation becomes fragile. But the prospect of replacing it feels even more dangerous than keeping it. So businesses stay. They patch. They add workarounds. They hire the one consultant who knows the system.

This is the Access Trap, and it compounds every year you remain in it. The technical reality driving urgency in 2026 is straightforward: Microsoft's investments are concentrated in cloud-native tools, Power Platform, and SQL Server. Access receives maintenance updates, not innovation. The pool of developers who specialize in Access is contracting. The question is no longer whether to migrate. It is how to do it without breaking the business in the process.

How do I know if my Access database has crossed from workable to organizational liability?

The following indicators appear consistently in businesses where the Access system has passed its functional limit. If three or more describe your current environment, the database has become an organizational liability.

- The Single-Expert Dependency. Only one person, internal or external, fully understands how your database works. If they left tomorrow, you would not know where to begin.

- The Concurrent User Ceiling. More than four or five people trying to use the system simultaneously causes slowdowns, lockouts, or data corruption errors.

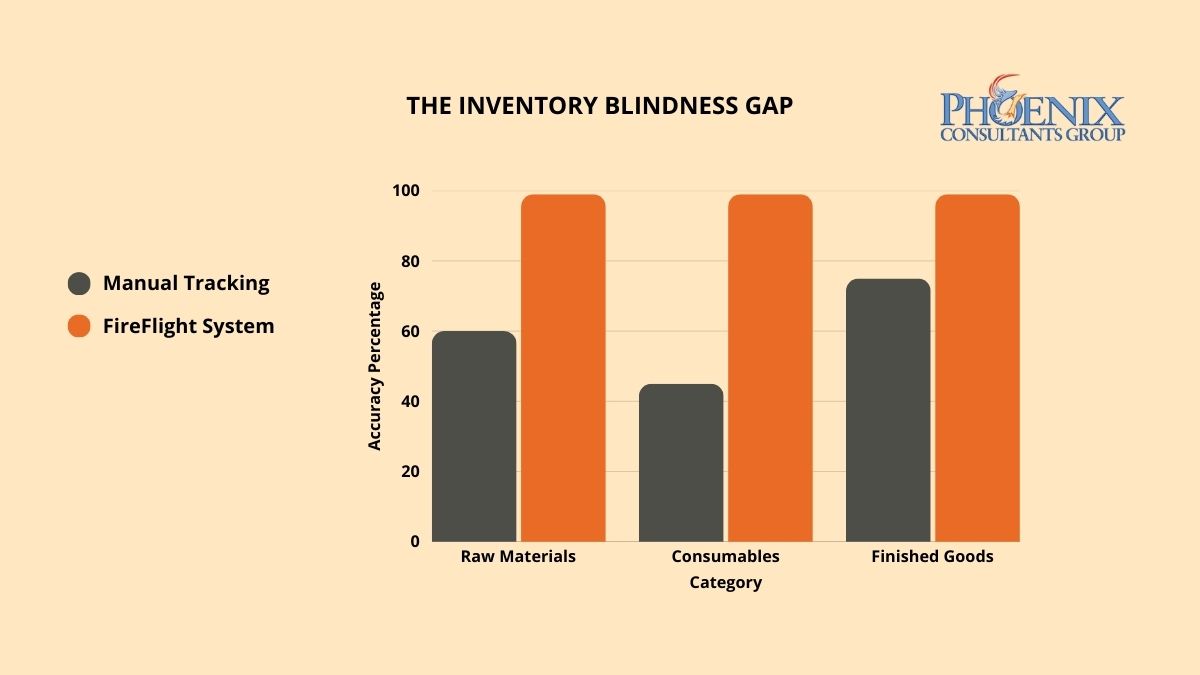

- The Manual Bridge Problem. Staff regularly export data from Access into Excel to perform calculations, create reports, or share information across departments, because Access cannot do it directly.

- The Integration Dead End. Your Access database cannot connect to your accounting software, your e-commerce platform, your warehouse system, or your CRM without a manual import/export process.

- The Audit Impossibility. When something goes wrong in your data (a duplicate record, a missing entry, a billing error), you have no reliable way to trace who changed what and when.

- The Backup Uncertainty. Your backup process for the Access .mdb or .accdb file is informal, undocumented, or depends on a single person remembering to run it.

- The Growth Ceiling. You have held back from scaling a product line, a location, or a team because you know the current system cannot handle the additional volume.

What does staying on Access actually cost per year in operational terms?

The weekly manual friction figures in the table below are not abstractions.² They represent your operations manager spending Sunday evening reconciling records. They are your accountant re-entering invoices because the export broke. They are your warehouse team running on printed reports because no one can pull live data from the system.

| Operational State | Weekly Manual Friction (Hours) | Annual Data Risk Exposure | Scalability Ceiling |
|---|---|---|---|
| Legacy Access: Single-User or Small Team | 15–25 hrs | High: corruption risk, no row-level audit trail | Hard ceiling at current volume |
| Access with Manual Excel Bridges | 30–40 hrs | Very High: dual-entry errors, no single source of truth | Cannot scale without adding headcount |
| FireFlight Migration (PCG Framework) | < 3 hrs | Near-Zero: transactional integrity, full audit trail | Engineered for 10x current volume |

That friction has a dollar value. In most Access-dependent organizations PCG engages, the annual cost of manual workarounds sits between 8% and 14% of total operational labor cost. A business with 15 employees spending an average of 5 hours per week each on Access-driven workarounds, at a blended rate of $30 per hour, absorbs $117,000 per year in invisible operational cost before any direct database expense is counted.
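The $117,000 figure can be reproduced directly. The short sketch below assumes a 52-week working year, which the paragraph does not state but which the figure implies; the function and parameter names are illustrative only.

```python
def annual_workaround_cost(employees: int, hours_per_week: float,
                           hourly_rate: float, weeks_per_year: int = 52) -> float:
    """Annual labor cost of manual workarounds.

    weeks_per_year=52 is an assumption; the text does not state it.
    """
    return employees * hours_per_week * hourly_rate * weeks_per_year


# Figures from the paragraph above: 15 employees, 5 hrs/week each, $30/hr.
cost = annual_workaround_cost(employees=15, hours_per_week=5, hourly_rate=30)
print(f"${cost:,.0f} per year")  # prints "$117,000 per year"
```

Substituting your own headcount, weekly hours, and blended rate gives a first-pass estimate for your organization.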

Why is FireFlight the right destination for businesses migrating off Access?

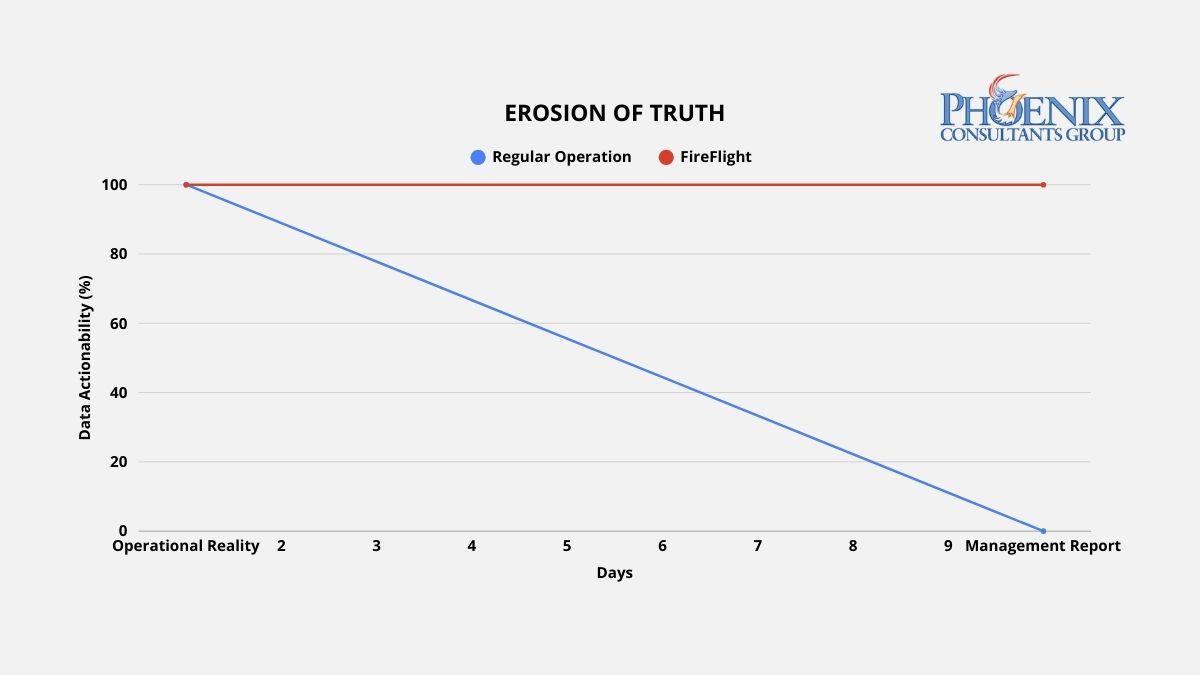

Access stores data in a single file. That architecture made sense for a desktop tool in 1995. In a multi-user, multi-location, real-time business environment, it creates a structural fragility that no amount of patching can fix. The file becomes the single point of failure. Every user who opens it adds risk. Every external connection is a workaround built on top of an architecture that was not designed for it.

FireFlight operates on a fundamentally different model. The data lives in a structured, relational SQL engine. Business logic is separated from the data layer. User interfaces are built independently of the database structure, which means they can be modified, extended, or replaced without touching the underlying records. Reporting is real-time, not a snapshot from last night's export. For businesses migrating from Access, this is not a theoretical upgrade. It is a structural correction.

- Data Preservation. Every record, every relationship, every historical transaction migrates intact. PCG's migration process does not lose data. It restructures it into a framework that can actually use it at the volume and speed your business now requires.

- Logic Translation. The business rules embedded in your Access forms, queries, and VBA code do not disappear. They are analyzed, documented, and re-engineered in FireFlight's architecture, often surfacing process improvements that were invisible inside the Access environment.

- Familiar Workflows, Modern Infrastructure. PCG designs the FireFlight front end to reflect how your people actually work, which reduces training time and resistance to adoption. Your team is not learning a foreign interface. They are using a more reliable version of the process they already know.
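To make the contrast with a file-based database concrete, here is a minimal sketch of how a relational engine can record who changed what and when, using a trigger that writes to an audit table. It uses Python's built-in `sqlite3` module as a stand-in for a production SQL engine. The table names, column names, and the `modified_by` convention are hypothetical and are not taken from FireFlight.

```python
import sqlite3

# In-memory SQLite database used purely as a stand-in for a production engine.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE invoices (
    id          INTEGER PRIMARY KEY,
    amount      REAL NOT NULL,
    modified_by TEXT NOT NULL
);

CREATE TABLE invoice_audit (
    audit_id   INTEGER PRIMARY KEY AUTOINCREMENT,
    invoice_id INTEGER NOT NULL,
    old_amount REAL,
    new_amount REAL,
    changed_by TEXT NOT NULL,
    changed_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
);

-- Every change to an invoice amount leaves a row in the audit table.
CREATE TRIGGER invoices_audit_update
AFTER UPDATE OF amount ON invoices
BEGIN
    INSERT INTO invoice_audit (invoice_id, old_amount, new_amount, changed_by)
    VALUES (OLD.id, OLD.amount, NEW.amount, NEW.modified_by);
END;
""")

conn.execute("INSERT INTO invoices (id, amount, modified_by) VALUES (1, 100.0, 'alice')")
conn.execute("UPDATE invoices SET amount = 125.0, modified_by = 'bob' WHERE id = 1")
conn.commit()

audit_rows = conn.execute(
    "SELECT invoice_id, old_amount, new_amount, changed_by FROM invoice_audit"
).fetchall()
print(audit_rows)  # [(1, 100.0, 125.0, 'bob')]
```

Because the trigger runs inside the database, the audit row is written no matter which form, report, or integration made the change.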

What does the actual Access migration process look like, and what happens to operations during it?

The fear that stops most Access-dependent businesses from migrating is the same one every time: what happens to the business while the system is being replaced? When migration is managed correctly, the answer is that nothing stops.

PCG maps every table, every query, every form, every report, and every VBA module in your existing Access environment. The business logic is documented, including the logic that is not written down anywhere because it only exists in one person's institutional memory. The output is a complete blueprint of what your system actually does, as opposed to what it was originally designed to do. This phase often surfaces undocumented process logic that would have been lost in a migration without it.

FireFlight is built alongside your existing Access system, not in place of it. Your team continues operating on Access throughout this phase. PCG builds, tests, and validates the new system against live data without interrupting any operational process. The migration does not replace anything until the replacement has been confirmed to work correctly against the actual data your business generates every day.

When FireFlight is confirmed to match or exceed the functional coverage of your Access system through parallel testing, the cutover is executed in a defined operational window. Business operations transfer to the new system within that window. Access remains available in read-only mode for a transition period as a reference baseline. The business does not stop. The risk is managed. The new system is live from day one of cutover.
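Parallel testing of the kind described above usually comes down to comparing the same records in both systems. The following is a minimal sketch of one such check, assuming both systems can export rows as dictionaries keyed by a shared `id` column. The function names and sample data are hypothetical and do not represent PCG's tooling.

```python
import hashlib


def row_fingerprint(row: dict) -> str:
    """Stable hash of a record, independent of column order."""
    canonical = "|".join(f"{key}={row[key]!r}" for key in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_tables(legacy_rows, migrated_rows, key="id"):
    """Report records that are missing, unexpected, or changed after migration."""
    legacy = {row[key]: row_fingerprint(row) for row in legacy_rows}
    migrated = {row[key]: row_fingerprint(row) for row in migrated_rows}
    return {
        "missing": sorted(legacy.keys() - migrated.keys()),
        "unexpected": sorted(migrated.keys() - legacy.keys()),
        "changed": sorted(k for k in legacy.keys() & migrated.keys()
                          if legacy[k] != migrated[k]),
    }


# Hypothetical sample data standing in for an exported Access table
# and the same table loaded into the new system.
legacy_rows = [
    {"id": 1, "customer": "Acme", "balance": "120.00"},
    {"id": 2, "customer": "Globex", "balance": "75.50"},
    {"id": 3, "customer": "Initech", "balance": "0.00"},
]
migrated_rows = [
    {"id": 1, "customer": "Acme", "balance": "120.00"},
    {"id": 2, "customer": "Globex", "balance": "57.50"},  # transposed digits
]

report = compare_tables(legacy_rows, migrated_rows)
print(report)  # {'missing': [3], 'unexpected': [], 'changed': [2]}
```

An empty report across every table is one reasonable precondition for scheduling a cutover window.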

What experience backs PCG's Microsoft Access migration methodology?

Allison Woolbert began programming in 1983 and has been working in Microsoft Access since 1995: more than thirty years of production-level engagement with the platform. That is not a credential listed on a website. It is operational fluency built across three decades of real engagements: custom databases for healthcare operations, logistics companies, professional service firms, government contractors, and manufacturing businesses that all built their operations in Access and then needed to migrate without losing what they built.

PCG was founded in 1995. For 31 years, the firm has operated as a specialist in custom systems and data architecture, and it was recognized early as a migration specialist precisely because of this combination: deep legacy knowledge and a modern architectural framework built specifically to receive that knowledge at enterprise scale. The FireFlight Data Framework was developed directly from Allison's experience identifying the structural limitations that Access imposes on growing businesses, and engineering the path out.

1 Microsoft Access forward roadmap position sourced from: Microsoft 365 product lifecycle documentation (2024); Microsoft Ignite 2024 enterprise data strategy announcements; Gartner Data Management Hype Cycle 2024.

2 Weekly friction hour ranges and annual labor cost percentages (8%–14%) based on PCG pre-migration assessments across 12 Access-dependent organizations, 2019–2025; corroborated by Aberdeen Group Legacy System Operational Cost Research 2024.

Frequently Asked Questions

Allison began programming in 1983 and has been working in Microsoft Access since 1995: more than thirty years of production-level engagement with the platform across healthcare operations, logistics companies, professional service firms, government contractors, and manufacturing businesses. Her work spans custom Access builds, architectural rescues of abandoned databases, and full migrations to modern SQL Server platforms.

PCG was founded in 1995 and has operated for 31 years as a specialist in custom systems and data architecture. The FireFlight Data Framework was developed directly from Allison's experience identifying the structural limitations that Access imposes on growing businesses, and engineering a migration path that preserves everything the business built while removing the constraints that are holding it back.

Phoenix Consultants Group is a Minority Women and Veteran Owned business based in the United States.

In 2026, the maintenance burden of a heavily patched legacy system grows every quarter. Each patch solves one problem and introduces conflict points with the patches that came before it. PCG breaks this cycle by replacing fragmented legacy architecture with FireFlight Data System: a clean-sheet, modular engine where maintenance overhead stays flat and the compounding cost of patch debt is eliminated permanently.

Why does every patch make a legacy system more fragile, not less?

Technical debt rarely announces itself as a crisis. It accumulates gradually, one justified shortcut at a time. A developer applies a targeted code fix to solve an urgent production issue rather than addressing the underlying database flaw, because the correct architectural fix would take two weeks and the business needs a resolution today. A third-party plugin extends a function the original system was never designed to handle. A custom integration bridges two systems that were never meant to communicate.

Each of these decisions is individually defensible. Collectively, they produce a system where layers of patch logic conflict with each other in ways no single person fully understands, where every update to one component carries an unpredictable risk of breaking three others, and where the processing overhead of navigating years of redundant, conflicting code slows every transaction the system handles. At this point, the organization is not maintaining a system. It is servicing a liability. The IT budget is not buying capability. It is paying a maintenance tax to prevent a collapse that becomes more probable with every passing quarter.

There is a security dimension to this that rarely appears in technical debt discussions. Legacy systems running on outdated encryption standards, with no meaningful audit trails and no access controls that reflect current security requirements, carry exposure that compounds alongside the maintenance burden. Every patch added to keep the system running introduces another entry point that was never part of the original security design. The system is not just expensive to maintain. It is increasingly difficult to defend.

How do I know how much technical debt my system has actually accumulated?

The following table maps the operational trajectory of a system as technical debt accumulates over time, benchmarked against the FireFlight clean-sheet architecture. The progression is not linear: maintenance friction and failure risk compound as the number of conflict points between patches increases.¹

| System State | Weekly IT Friction (Hrs on Maintenance) | Operational Consequence | System Failure Risk |
|---|---|---|---|
| 10+ Year Debt Overload: Critical patch dependency | 20-35 hrs/week | IT team cannot safely apply updates. Every change is a risk event. New capabilities require months of custom work. | Critical: any update is a potential collapse |
| 7-Year Frankenstein: Multiple conflicting patches | 12-20 hrs/week | Frequent bugs and integration failures. Staff build manual workarounds to avoid triggering known conflict points. | High: frequent bugs and integration failures |
| 3-Year Legacy: Early patch accumulation | 5-10 hrs/week | Manageable now but accelerating. Each new integration adds risk. The maintenance curve has begun to steepen. | Moderate: manageable but accelerating |
| FireFlight Clean-Sheet: Unified modular architecture | Under 2 hrs/week | New modules extend the system without modifying existing components. Maintenance overhead stays flat as the system grows. | Near zero: no patch conflict points |

The progression from 3-Year Legacy to 10+ Year Debt Overload is not a hypothetical trajectory. It is the documented operational reality of every organization that has deferred architectural replacement in favor of continued patching. The maintenance friction does not plateau. The failure risk does not stabilize. Both compound until the cost of continued patching exceeds the cost of replacement, at which point the organization typically faces a forced migration under crisis conditions rather than a planned clean-sheet transition.
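One way to see why the curve steepens rather than flattens: if any patch can potentially interact with any other patch, the number of possible conflict points grows with the number of pairs, n(n-1)/2, not with the number of patches. This pairwise model is a simplifying assumption used here for illustration. It is not a PCG measurement, and real systems will have fewer actual conflicts than the theoretical maximum.

```python
def potential_conflict_points(patches: int) -> int:
    """Upper bound on pairwise interactions among `patches` patches.

    Assumes any two patches could conflict, which is an illustrative
    worst case rather than a measured figure.
    """
    return patches * (patches - 1) // 2


for n in (5, 10, 20, 40):
    print(f"{n:>2} patches -> up to {potential_conflict_points(n)} conflict points")
```

Under this assumption, doubling the patch count from 20 to 40 roughly quadruples the number of pairs that someone has to reason about before making a change.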

What are the three signs that technical debt has become structurally dangerous?

Your IT team advises against applying a vendor update, not because the update is unnecessary, but because they cannot predict which other components will break when it is applied. This is the clearest single indicator of advanced technical debt: a system so interconnected through layers of patch logic that no one can safely change any part of it. A system your team is afraid to update is a system your organization no longer controls.

Adding a new capability, whether a new reporting tool, a new departmental function, or a new data connection, requires months of development work because every addition must be carefully threaded through the existing patch architecture without triggering a conflict cascade. In a clean-sheet system, new modules extend the existing core. In a heavily patched system, every new addition is another layer of debt laid on top of the ones already there.

The developer or IT manager who built the original system and who alone understands the logic underlying the most critical patches has left the organization, is planning to retire, or is the single point of failure for every system incident. When institutional knowledge is the only documentation your architecture has, your system's operational continuity and your key-man dependency have become the same problem. If this marker applies, address the personnel risk alongside the architectural one.

Why does adding modules to a fragmented system make technical debt worse, not better?

Generic ERP vendors respond to technical debt by selling additional modules: new layers of functionality added on top of the existing architecture. This approach does not resolve the structural problem. It compounds it. Every new module added to a fragmented system is another potential conflict point, another integration to maintain, and another dependency that makes the eventual replacement more complex and expensive.

PCG takes the opposite architectural position. FireFlight is built on a single, clean codebase: .NET 8 (ASP.NET Core) with Razor Pages, backed by a SQL Server architecture engineered for long-term performance stability. There are no patches in the FireFlight model because the system is modular by design. Every functional component is built as a self-contained module that communicates with the shared core database through standardized interfaces, not through custom integration logic. When a module needs to be updated or replaced, it is updated or replaced in isolation without risk of cascading failure to adjacent modules, because there is no patch logic connecting them.

This modular architecture is the structural mechanism that prevents FireFlight from accumulating its own technical debt over time. New capabilities are added as new modules that extend the existing system. The core database architecture remains clean. The codebase remains navigable by any qualified .NET developer, not just the person who wrote the original patches. The maintenance overhead does not compound. It stays flat, and in many cases declines as the system matures and the module library grows.
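The idea of modules that talk to a shared core only through a standardized interface can be sketched in a few lines. The example below is written in Python for brevity rather than in .NET, and every class and method name in it is hypothetical. It illustrates the pattern, not FireFlight's actual code.

```python
from typing import Protocol


class Module(Protocol):
    """Interface every module is assumed to implement (hypothetical)."""
    name: str

    def handle(self, event: dict) -> dict: ...


class Core:
    """Shared core that only knows modules through the interface above."""

    def __init__(self) -> None:
        self._modules: dict[str, Module] = {}

    def register(self, module: Module) -> None:
        # Registering under an existing name replaces that module in isolation.
        self._modules[module.name] = module

    def dispatch(self, module_name: str, event: dict) -> dict:
        return self._modules[module_name].handle(event)


class InvoicingV1:
    name = "invoicing"

    def handle(self, event: dict) -> dict:
        return {"total": event["quantity"] * event["unit_price"]}


class InvoicingV2:
    name = "invoicing"

    def handle(self, event: dict) -> dict:
        subtotal = event["quantity"] * event["unit_price"]
        return {"total": round(subtotal * (1 + event.get("tax_rate", 0.0)), 2)}


class Inventory:
    name = "inventory"

    def handle(self, event: dict) -> dict:
        return {"remaining": event["on_hand"] - event["quantity"]}


core = Core()
core.register(InvoicingV1())
core.register(Inventory())

order = {"quantity": 3, "unit_price": 10.0, "on_hand": 12, "tax_rate": 0.1}
before = core.dispatch("inventory", order)

core.register(InvoicingV2())  # swap one module; nothing else is edited
after = core.dispatch("inventory", order)

print(before == after)  # True: the inventory module is unaffected by the swap
```

The design choice that matters is that `Core` never imports or inspects a concrete module, so replacing one cannot silently change the behavior of another.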

The starting point is a free 30-minute consultation. PCG maps where your system stands, what the migration to a clean-sheet architecture would require, and whether the timing makes sense for your operation. No commitment required at that stage.

What does migrating from a patched legacy system to FireFlight actually look like?

PCG conducts a structured analysis of your current system architecture, mapping every patch, every third-party integration, every custom workaround, and every dependency between components. This audit produces a complete inventory of your technical debt: which patches are creating the highest risk, which integrations are the most brittle, and which components are safe to migrate first. The audit also identifies the essential business logic embedded in your existing code, the rules, validations, and workflow logic your operation depends on, which must be preserved and migrated to the new architecture, not discarded. This phase typically takes two to three weeks.
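Once dependencies between components have been inventoried, deciding which components can move first is a graph-ordering problem. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` on a hypothetical dependency map. The component names are invented for illustration, and a real audit would weigh risk and brittleness as well as ordering.

```python
from graphlib import TopologicalSorter

# Hypothetical inventory: each component maps to the components it depends on.
dependencies = {
    "customers": set(),
    "products": set(),
    "orders": {"customers", "products"},
    "invoicing": {"orders"},
    "reporting": {"orders", "invoicing"},
}

# static_order() yields each component only after everything it depends on.
migration_order = list(TopologicalSorter(dependencies).static_order())
print(migration_order)

# Components with no dependencies are the candidates to migrate first.
safe_first = sorted(name for name, deps in dependencies.items() if not deps)
print(safe_first)  # ['customers', 'products']
```

If the dependency map contains a cycle, `TopologicalSorter` raises `graphlib.CycleError`, which is itself a useful audit finding: those components have to be migrated together.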

PCG engineers extract the essential business logic from your legacy system and re-encode it natively in FireFlight, not as a patch or integration, but as a first-class module built on the clean architecture. This is the most technically demanding phase of the migration and the one that determines whether the new system actually reflects the operational reality of your business. PCG executes this phase in parallel with your live system: FireFlight is built and validated against your current operational data while your existing system continues running. Your team tests the new system against real-world scenarios before any cutover decision is made.

Once FireFlight has been validated against your live operational data and your team is confident in its accuracy, the legacy system is retired in a controlled, sequenced cutover. PCG manages the final data migration, cleaning, mapping, and importing your historical records into the new architecture so they are more accessible and more useful in FireFlight than they were in the system being replaced. The legacy patches are gone. The maintenance overhead is eliminated. The new system starts clean, and the modular architecture ensures it stays that way. Most migrations complete in 8 to 16 weeks from audit to go-live.

What experience backs the FireFlight clean-sheet methodology?

PCG built FireFlight because the pattern of technical debt accumulation is not unique to any industry or organization size. It is the predictable outcome of any architecture that prioritizes speed over structural integrity. Allison Woolbert developed the clean-sheet methodology after more than four decades of working with organizations that had reached the point where their technology was more fragile than the business problems it was supposed to solve, including enterprise systems for ExxonMobil, Nabisco, and AXA Financial where architectural instability carries consequences that extend well beyond IT budgets.

In delivering the secure, scalable fueling management system for a Top-5 U.S. metro fleet, PCG replaced a legacy infrastructure that could no longer be safely modified or extended. Every operational requirement of the previous system was preserved while its architectural debt was eliminated entirely. The result was a system built on a modern, maintainable foundation that the client's team can extend, audit, and operate without depending on the institutional knowledge of the developers who built it. That is the standard PCG applies to every clean-sheet engagement.

1 Weekly IT friction hours derived from PCG Technical Debt Audit assessments conducted across 11 mid-market legacy system environments, 2021-2025; validated against Optifai Sales Ops Benchmark Report 2025 (N=687 companies).

Frequently Asked Questions

Allison's experience in software development goes back to the early 1980s, predating PCG's founding in 1995. She has spent more than four decades solving the hardest data problems in business, working with Fortune 500 corporations, growing mid-size firms, and small businesses across industries ranging from manufacturing and fleet management to healthcare staffing and regulatory compliance.

Her enterprise work includes intelligence systems for ExxonMobil, Nabisco, and AXA Financial, environments where architectural instability carries consequences well beyond IT budgets. FireFlight Data System is the product of everything she learned: a purpose-built, clean-sheet engine designed to eliminate the structural failures she encountered and fixed throughout her career.

PCG founded 1995. phxconsultants.com | fireflightdata.com

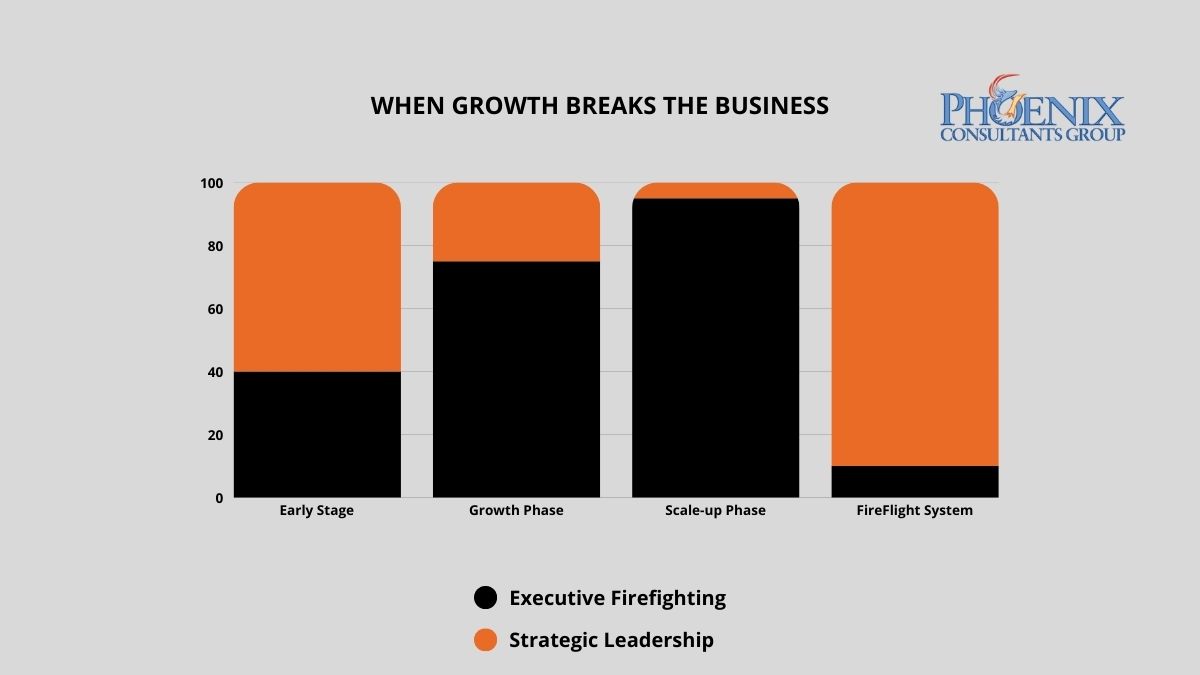

When a business doubles in revenue but its systems stay the same, the CEO stops leading and starts firefighting. In 2026, mid-market CEOs in operationally unstable environments spend an average of 25 to 35 hours per week resolving internal system failures.¹ That is not a management problem. It is an architectural one. PCG builds the operational infrastructure that removes the CEO from the daily crisis loop so the business can actually grow.

Why does growth create chaos instead of momentum?

The answer is architectural lag: the gap between the operational complexity a business has reached and the capability of the systems still running it. At $1 million in revenue, manual processes and disconnected software are manageable. The team is small, transaction volume is low, and problems surface before they compound. At $5 million, those same processes become bottlenecks. At $10 million, they become the primary constraint on further growth.

Every manual reconciliation step is now a daily friction point. Every disconnected system is a source of conflicting data. Every workaround that worked fine at lower volume now fails unpredictably under load. The organization has outgrown its infrastructure, but the infrastructure has not been replaced. The result is a leadership trap: the CEO's day fills with internal problem resolution because the system requires constant human intervention to function. Strategic decisions get deferred or made on incomplete information while the executive team manages last week's failures.

This is the condition PCG resolves. Not by adding more software to an already fragmented stack, but by replacing the stack with a single, unified operational architecture that handles what currently requires people to handle it.

Leadership bandwidth consumed by operational firefighting drops sharply once the system eliminates the intervention points that generate fires. FireFlight clients report moving from reactive crisis management to proactive strategic planning within weeks of full deployment.

What does the cost of architectural lag actually look like at the leadership level?

Operational chaos does not just consume time. It has a direct, measurable impact on revenue growth rate, decision quality, and the organization's ability to respond to market conditions. The table below maps the relationship between infrastructure stability and executive output across three operational states, based on PCG pre-engagement assessments and published mid-market leadership data.²

| Operational State | Weekly Crisis Hours (Leadership) | Annual Revenue Growth Rate | Strategic Decision Capacity |
|---|---|---|---|
| Chaos: Legacy or manual infrastructure | 25-35 hrs/week | 0-5% (stagnant) | Under 20% of executive bandwidth |
| Reactive: Patchwork or partial ERP | 12-20 hrs/week | 5-12% (friction-constrained) | Around 40% of executive bandwidth |
| Strategic: FireFlight unified architecture | Under 3 hrs/week | Unconstrained by infrastructure | Over 80% of executive bandwidth |

FireFlight does not reduce the number of fires. It eliminates the conditions that generate them. Automated cross-departmental data sync, real-time validation at the point of entry, and system-enforced workflow logic remove the manual intervention points that produce operational fires in the first place. The CEO is no longer the error-correction mechanism of last resort. The architecture handles that function.
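Real-time validation at the point of entry means a bad record is rejected when it is typed, not discovered in next week's reconciliation. Here is a minimal sketch of that idea for a timesheet entry. The record shape and the three rules are assumptions chosen for illustration, not FireFlight's validation logic.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TimesheetEntry:
    employee_id: str
    work_date: date
    hours: float


def validate_entry(entry: TimesheetEntry, known_employees: set[str],
                   today: date) -> list[str]:
    """Return a list of problems; an empty list means the entry is accepted."""
    errors = []
    if entry.employee_id not in known_employees:
        errors.append(f"unknown employee {entry.employee_id!r}")
    if not 0 < entry.hours <= 24:
        errors.append(f"hours must be between 0 and 24, got {entry.hours}")
    if entry.work_date > today:
        errors.append("work date is in the future")
    return errors


known = {"E100", "E200"}
today = date(2026, 3, 2)  # fixed date so the example is reproducible

good = TimesheetEntry("E100", date(2026, 2, 27), 8.0)
bad = TimesheetEntry("E999", date(2026, 3, 9), 30)

print(validate_entry(good, known, today))  # []
print(validate_entry(bad, known, today))
```

Each rule encoded this way is one fewer category of error that has to be caught by a person downstream.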

How do I know if the chaos is coming from my systems or my team?

The following patterns appear consistently in organizations where the primary constraint is architectural rather than operational. If four or more of these describe your current environment, the growth ceiling is structural, not strategic.

- The Morning Fire. Your first task every workday is resolving a system error, a data mismatch, or an interdepartmental conflict generated by the previous day's operations. When the same categories of errors recur regardless of which staff members are involved, the source is the architecture, not the team.

- The Expansion Hold. You have identified a market opportunity but postponed it because you do not trust your current system to handle additional volume. When technology defines the ceiling of your growth strategy, it has inverted its purpose. A system should expand your capacity, not set its limit.

- The Visibility Gap. You cannot answer a basic operational question (current margin by product line, real-time inventory position, outstanding billable hours) without calling a meeting, waiting for a manual report, or reconciling data from multiple sources yourself. Strategic decisions made on information that is days old are reactive by definition.

- The Single-System Dependency. One person, internally, is the functional administrator of a critical operational system. Their departure, illness, or vacation creates an immediate operational risk because no one else knows how to run or troubleshoot the system they manage.

- The Reconciliation Meeting. Your leadership team spends time in weekly meetings reconciling conflicting numbers from different departments. Both sets of numbers are accurate for the system that generated them. Neither reflects current operational reality. The conflict is not between the departments. It is between disconnected data sources.

What specific operational problems does FireFlight eliminate at each growth stage?

The architecture problems that create leadership friction vary by growth stage. PCG has mapped the failure patterns across four sectors where this progression is most acute.

Manufacturing and Industrial Operations

Production floor data, job costing, and multi-location inventory are the first functions to break as volume grows. Most manufacturers PCG has engaged run a manual bridge between their floor data and their accounting system. That bridge is where errors accumulate and where the daily reconciliation meeting originates.

Environmental and Compliance Operations

Air permit tracking, waste manifest documentation, and inspection records require audit trails that hold up under regulatory scrutiny. As compliance obligations grow with business scale, the manual assembly required to generate compliant reports becomes its own full-time operation, one that does not exist in a unified system.

Healthcare Staffing and Multi-Site Operations

Scheduling, credentialing, and payroll for multi-facility organizations require real-time accuracy across all three simultaneously. Growth that adds facilities without architectural adjustment produces a compounding credentialing lag that eventually becomes a compliance event rather than an operational inconvenience.

Fleet and Field Service Operations

Dispatch, compliance documentation, and billing for field service teams require data that flows from the field to the back office without manual transfer steps. Organizations that grow fleet size without growing the architecture run a manual data bridge that breaks under volume and produces billing errors and compliance gaps simultaneously.

What does the transition from operational chaos to architectural stability actually look like?

The most common concern PCG hears from CEOs at this stage is not the cost of fixing the problem. It is the fear that fixing it will create a new crisis in the process. PCG's three-phase methodology is built around that constraint. The business does not stop at any point during the transition.

System Stress Test

PCG maps every point in your current operational flow where manual intervention is required, every system that produces conflicting data, and every process that depends on a specific individual rather than an automated rule. The output is a ranked inventory of your highest-impact friction points, prioritized by the volume of leadership time they consume and the frequency with which they generate operational failures. This phase does not touch your current systems. It is a diagnostic, not a deployment.

Architectural Harmonization

PCG deploys FireFlight as the unified operational core, migrating your existing data streams and configuring automated sync, validation, and reporting logic for each identified friction point. The deployment runs entirely in parallel with your live operations. Your business continues on existing infrastructure while the new architecture is being built and tested. Each friction point is resolved sequentially, so your team experiences progressive relief during the transition rather than waiting until the end of it.

Strategic Handoff

Once FireFlight is fully operational, your leadership team transitions to a management-by-exception model. The system flags anomalies and exceptions automatically. Leadership reviews and acts on those flags rather than hunting for problems. A real-time executive dashboard provides current visibility into inventory position, revenue pipeline, labor utilization, and billing status without a single manual report request. The fires stop. The strategic agenda resumes.
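Management by exception can be sketched as a comparison of current metrics against expected ranges, surfacing only what falls outside them. The metric names and limits below are hypothetical placeholders. In practice each organization would define its own.

```python
def flag_exceptions(metrics: dict[str, float],
                    limits: dict[str, tuple[float, float]]) -> list[str]:
    """Return one message per metric that is missing or outside its range."""
    flags = []
    for name, (low, high) in limits.items():
        value = metrics.get(name)
        if value is None:
            flags.append(f"{name}: no data reported")
        elif not low <= value <= high:
            flags.append(f"{name}: {value} outside expected range {low}-{high}")
    return flags


# Hypothetical expected ranges and a hypothetical snapshot of current values.
limits = {
    "inventory_days_on_hand": (10, 45),
    "labor_utilization_pct": (70, 95),
    "unbilled_hours": (0, 40),
}
metrics = {"inventory_days_on_hand": 6, "labor_utilization_pct": 82}

for flag in flag_exceptions(metrics, limits):
    print(flag)
# inventory_days_on_hand: 6 outside expected range 10-45
# unbilled_hours: no data reported
```

Metrics inside their range produce no output at all, which is the point: leadership attention goes only to the items that need a decision.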

What has PCG actually built, and for whom?

Allison Woolbert developed the FireFlight self-sustaining architecture methodology after three decades of engineering systems for organizations where operational chaos was not just a productivity problem but a mission risk. Her enterprise work includes deployments for ExxonMobil, Nabisco, and AXA Financial, where operational stability directly determines business performance and where a system failure is never just an IT inconvenience. PCG was founded in 1995.

That same standard is applied to every PCG commercial engagement. When a Top-5 U.S. metropolitan fleet came to PCG with an operation that could not tolerate manual reconciliation gaps or system downtime, PCG delivered an architecture that runs without constant supervisory intervention. The operational team manages by exception. The system manages itself. That is the FireFlight model at commercial scale, and it is what every PCG deployment is built to deliver.

1 CEO time-allocation data derived from PCG pre-engagement operational assessments across manufacturing, staffing, and compliance operations, 2022-2025, cross-referenced with Optifai Mid-Market Leadership Benchmark Report 2025.

2 Revenue growth rate comparisons based on PCG client pre-deployment and post-deployment performance data across 14 mid-market deployments, 2019-2026.

Frequently Asked Questions

Allison's experience in software development goes back to the early 1980s, predating PCG's founding in 1995. She has spent decades working inside organizations where operational chaos had become the default operating condition, rebuilding the infrastructure that allowed leadership to lead again rather than firefight.

Her enterprise work includes operational systems for ExxonMobil, Nabisco, and AXA Financial. Her commercial deployments span fleet management, physician credentialing, airport ground support operations, environmental compliance tracking, and industrial safety software across more than 500 deployed applications. FireFlight is the architecture she developed so that growth would produce momentum instead of chaos.

Unplanned IT downtime costs mid-size organizations between $5,000 and $9,000 per hour when the one person who understands the system is unavailable.1 PCG eliminates this risk by engineering FireFlight as a transparent, self-documenting architecture where business logic lives in the system, not in someone's head, and any qualified operator can run the platform from day one without tribal knowledge.

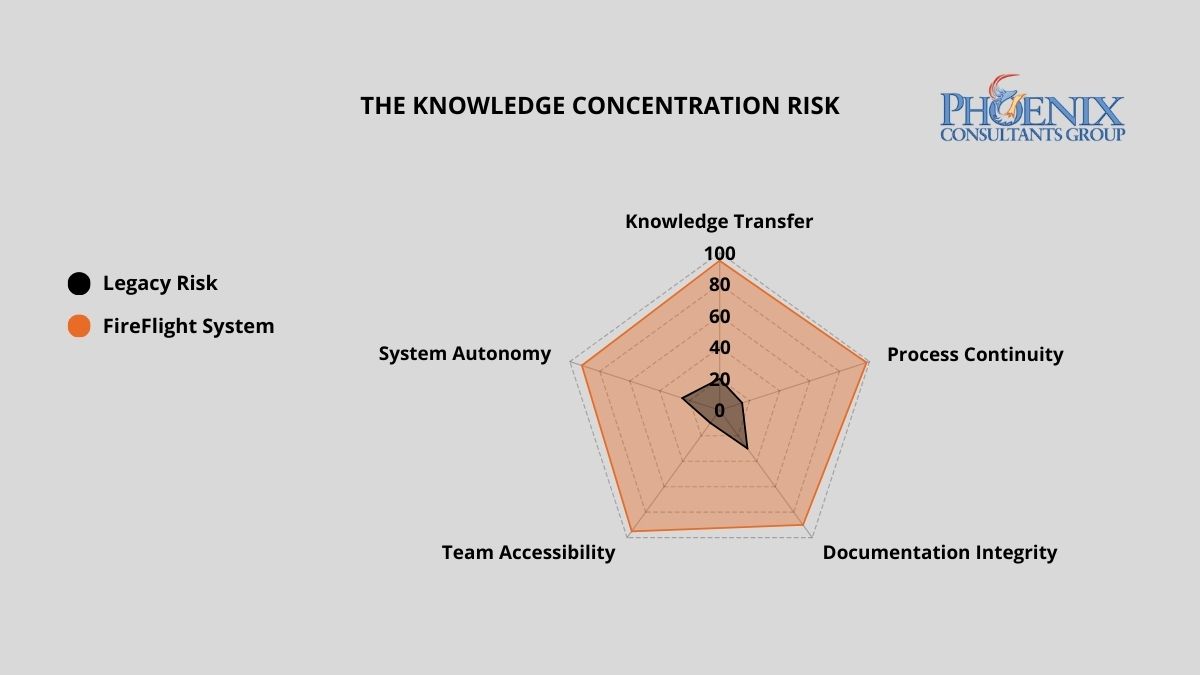

Why do organizations end up with systems only one person can operate?

The Expert Trap is almost never intentional. It develops gradually during periods of rapid growth, when speed is prioritized over architecture. A developer builds a workaround to solve an urgent problem. A power user creates a macro that automates a manual process. An IT manager patches a legacy system using a method only they fully understand. Each of these decisions makes sense in the moment. Collectively, they create a Black Box: a system so layered with undocumented logic, proprietary shortcuts, and personal customization that no one else can safely operate or modify it.

Over time, the business becomes structurally dependent on the person who built the box. IT leadership cannot modify the system without consulting them. Finance cannot run a custom report without their help. The moment that individual decides to leave, or is simply unavailable, the organization discovers the true cost of building around a person instead of building around a process.

What does key-man dependency actually cost when it becomes a real incident?

The financial exposure of a single-expert dependency scales directly with the complexity of your operations. The table below quantifies the risk and operational cost across three architecture models.2

| Architecture Model | Weekly Hours Lost to Expert Bottlenecks | Downtime Cost Per Incident | Continuity Risk on Key Departure |
|---|---|---|---|
| Black Box: Undocumented Custom System | 15–25 hrs | $5K–$50K+ | Total operational paralysis |
| Standard ERP: Documented, Generic | 5–10 hrs | $2K–$15K | Significant downtime; retraining lag |
| FireFlight Transparent System | < 1 hr | Near zero | Seamless: logic lives in the system |

FireFlight shifts institutional knowledge from the individual to the architecture itself. Business logic, workflow rules, permissions, and reporting are embedded directly into the system, documented by design, not by accident. Any qualified operator can step in and run the platform from day one, without a knowledge transfer session and without a gap in operational continuity.
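As a rough worked example of how the per-incident figures accumulate, the sketch below multiplies an outage duration by the $5,000 to $9,000 hourly range cited earlier. The function name and the four-hour outage are assumptions made for illustration, not measured PCG data.

```python
def downtime_exposure(hours, rate_low=5_000, rate_high=9_000):
    """Return the (low, high) cost in dollars for an outage lasting `hours`.

    The default rates are the hourly range quoted in the text above.
    """
    return hours * rate_low, hours * rate_high


# Hypothetical incident: the one expert is unreachable for four hours.
low, high = downtime_exposure(4)
print(f"4-hour outage: ${low:,} to ${high:,}")
```

A single four-hour incident at those rates lands between $20,000 and $36,000, inside the range the table gives for an undocumented custom system.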

How do I know if my organization is already inside the Expert Trap?

Three markers indicate active key-man dependency. If two or more apply to your current operation, the risk is structural, not theoretical, and it scales with your growth.

The Key-Man Query

A critical system error occurs and your first instinct is to call a specific person, not a process, not a help desk, not a documented procedure. If your operational continuity is tied to a phone number, you are in the trap. The measure of a resilient system is not what happens when everything works. It is what happens when something breaks and the expert is on a plane.

The Manual Secret

Specific reports, data exports, or system functions require a sequence of undocumented steps that only one or two people know. When those people are unavailable, the function stops. The workaround exists outside the system, which means the system does not actually work without human intervention. Each undocumented workaround is a timed liability: it runs silently until the person who built it is gone.

The Update Fear

Your team avoids applying system updates, adding new users, or modifying existing workflows because no one is confident the changes will not break something. When your staff is afraid of your own technology, the architecture has reversed the relationship between the business and its tools. The system is running the organization rather than serving it.

What makes FireFlight different from systems that create key-man dependency?

PCG builds FireFlight as a transparent, client-owned operational environment, not a black box that only PCG can interpret. Every workflow rule, permission structure, and piece of reporting logic is visible, documented, and built to reflect your specific business processes. Your team understands what the system does and why it does it.

That transparency is not a risk to PCG's business model. It is the foundation of it. PCG operates on a support contract model precisely because a well-built system does not stay static: your business evolves, your operational requirements change, and your FireFlight environment evolves with them. PCG's clients stay not because switching feels impossible, but because the system continues to deliver value as the business grows and staying is the better strategic choice.

The underlying architecture, ASP.NET Core on .NET 8 with Razor Pages backed by SQL Server, is industry-standard technology with a large global pool of qualified developers. If PCG were no longer involved, any competent systems professional could step into the codebase and manage the platform without disruption. That is not a hypothetical guarantee. It is an architectural fact built into every deployment.

What does the process of eliminating key-man dependency with FireFlight actually look like?

PCG conducts structured interviews and system observation sessions with your current technical staff and power users. Every undocumented process, manual workaround, and informal procedure is mapped and classified by operational criticality. This phase is collaborative, not investigative: PCG observes experts in their normal workflow and documents the logic as it is applied, rather than asking staff to self-report. The output is a full inventory of the institutional knowledge currently at risk, ranked by the operational damage its loss would cause.

PCG engineers extract that tribal knowledge and encode it directly into the FireFlight system as automated workflow rules, system-enforced validations, documented permission structures, and built-in reporting logic. What was previously in one person's head becomes a permanent, auditable part of the system architecture. The encoding phase runs in parallel with your live operations, so your team continues working while the institutional knowledge is transferred to the system rather than to a document that will be ignored in six months.
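One way to picture "encoding tribal knowledge" is as a table of named, documented rules that the system checks on every record. The Python sketch below is an illustration under assumptions, not FireFlight's implementation: the `Rule` type, the rule IDs, and the two order rules are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    rule_id: str
    description: str               # the documented reason the rule exists
    check: Callable[[dict], bool]  # returns True when the record passes


# Hypothetical rules that previously lived only in one person's head.
RULES = [
    Rule("ORD-001", "Orders over 10,000 need manager approval",
         lambda o: o["total"] <= 10_000 or o.get("approved_by") is not None),
    Rule("ORD-002", "Every order must reference a customer",
         lambda o: bool(o.get("customer_id"))),
]


def validate(order):
    """Return the rules the order violates, so each failure explains itself."""
    return [r for r in RULES if not r.check(order)]


order = {"customer_id": "C-17", "total": 12_500}
for r in validate(order):
    print(f"{r.rule_id}: {r.description}")
```

Because each rule carries its own description, the rejection message doubles as documentation: a new operator learns why the order was blocked without asking the person who invented the rule.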

Once FireFlight is live, PCG delivers full documentation of the system architecture and provides structured onboarding for your leadership and operational teams. Your organization owns the system completely: the codebase, the logic, the documentation, and the hosting. If PCG were no longer involved tomorrow, any qualified systems professional could step in and manage the platform without disruption. That is not a contractual promise. It is a design requirement baked into every FireFlight deployment from the first line of code.

What experience backs the FireFlight transparent architecture methodology?

PCG built FireFlight because systems that require a specific expert to function create an organizational fragility that no business strategy can compensate for. Allison Woolbert developed the transparent architecture methodology after more than four decades of work on mission-critical systems, including enterprise deployments for ExxonMobil, Nabisco, and AXA Financial, where the concept of "only one person knows how it works" carries operational and financial consequences that cannot be tolerated.

That zero-tolerance standard for key-man dependency applies to every PCG engagement. For both the ground support equipment management system built for airport operations and the end-to-end credentialing and payroll platform built for a multi-facility physician staffing organization, PCG's mandate was identical: build a system the organization can operate, audit, and extend independently, not one that requires a standing support relationship to function.

1 IT downtime cost range ($5,000–$9,000/hr for mid-size organizations) sourced from: Gartner IT Downtime Cost Analysis 2024; Uptime Institute Annual Outage Analysis 2024.

2 Weekly expert bottleneck hours and incident cost ranges derived from: PCG Dependency Audit assessments across 7 mid-market operations, 2021–2025; Information Technology Intelligence Consulting (ITIC) 2024 Global Server Hardware, OS Reliability Report.

Frequently Asked Questions

Allison's experience in software development goes back to the early 1980s, predating PCG's founding in 1995. She has spent decades solving the hardest data problems in business, working with Fortune 500 corporations, growing mid-size firms, and small businesses across industries ranging from manufacturing and fleet management to healthcare staffing and regulatory compliance.

Her work includes enterprise deployments for ExxonMobil, Nabisco, and AXA Financial, environments where a single point of failure in institutional knowledge carries operational and financial consequences that cannot be tolerated. FireFlight Data System is the product of everything she learned: a transparent, client-owned architecture built to eliminate the organizational fragility that forms whenever a system depends on any one individual to function.

PCG founded 1995. phxconsultants.com | fireflightdata.com

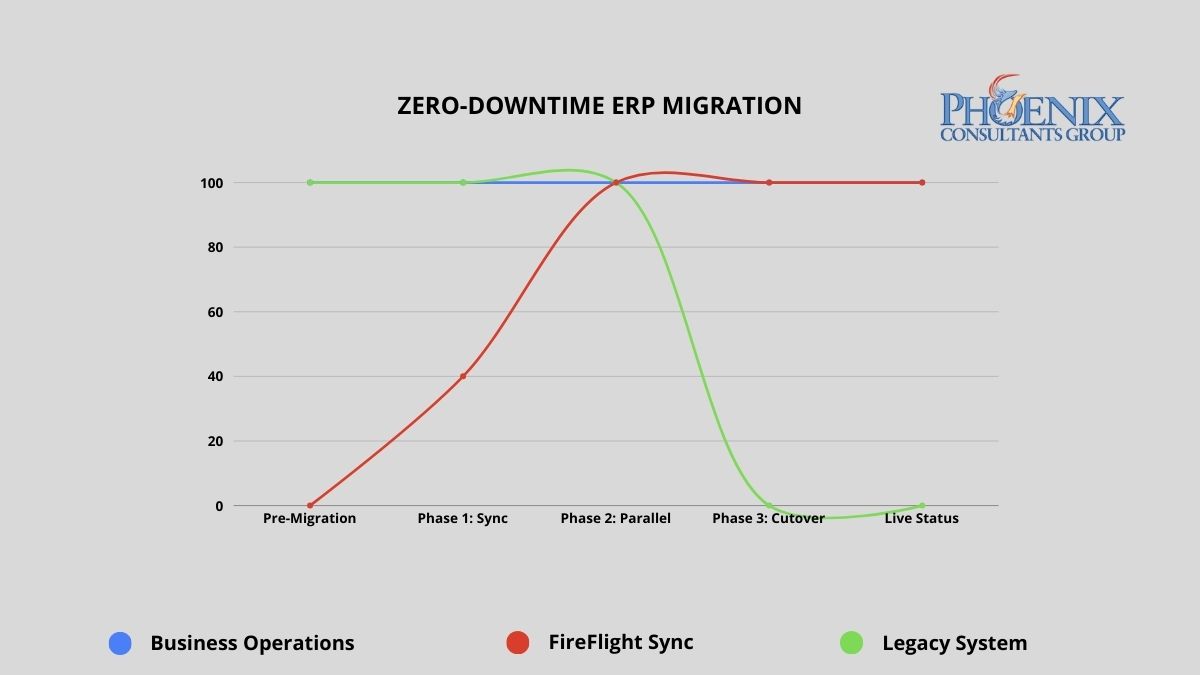

Yes, you can replace your ERP while it is still running. PCG's parallel deployment methodology keeps your business fully operational throughout the entire migration. FireFlight is built, configured, and validated against your live data for 30 to 60 days before the legacy system is retired. The cutover happens on a Sunday. Monday, your team operates on the new system. No downtime. No data loss. No rollback required.1

Why do most ERP migrations fail, and why does that fear cause organizations to stay too long?

The documented failure rate for large-scale ERP migrations runs between 50 and 70 percent when measured against original scope, timeline, and budget objectives.2 That number is not a reflection of bad vendors or bad intentions. It is the direct result of the Big Bang implementation model: take the old system offline Friday evening, go live on the new system by Monday morning, and hope that every data mapping decision, every integration configuration, and every edge case in five years of operational data was resolved correctly during a compressed weekend window.

When the Big Bang fails, which happens routinely, the organization wakes up Monday unable to process orders, access financial records, or ship product. Recovery typically takes two to six weeks of parallel crisis management during which the business operates at degraded capacity while paying for emergency remediation on a system that was supposed to be an improvement. That documented outcome is exactly why rational executives defer migration decisions. The fear is not irrational. The problem is that the Big Bang is not the only methodology available.

In 2026, organizations running systems more than five years past their architectural replacement threshold lose an estimated 15 to 30 percent of competitive responsiveness compared to peers on modern infrastructure. Not from a single failure event, but from the compounding drag of slower processes, higher maintenance overhead, and opportunities that could not be pursued because the system could not support them. The cost of staying is real and measurable. PCG's methodology removes the reason to stay.

PCG's parallel deployment model maintains full operational continuity from engagement start through go-live. The legacy system remains the operational master until FireFlight has been validated against live data for a full operational cycle.

Big Bang vs. parallel deployment: what does the risk difference actually look like?

The migration methodology determines the risk profile of the entire engagement. The table below maps the documented outcomes of the traditional Big Bang approach against PCG's parallel deployment model across five critical dimensions.

| Risk Dimension | Traditional Big Bang Implementation | PCG Zero-Downtime (FireFlight) |
|---|---|---|
| Operational downtime | 24 to 72+ hours planned; weeks if recovery required | Zero minutes throughout the entire process |
| Data integrity at go-live | Manual reconciliation post-cutover; typical error rate 5-15% | Validated against live data for 30-60 days before cutover |
| Implementation failure rate | 50-70% fail to meet original scope (Standish Group CHAOS Report) | No go-live until both parties confirm accuracy against live data |
| Staff transition pressure | Extreme: single high-stakes cutover with no fallback | Controlled: 30-60 days of real-world experience before cutover |
| Rollback capability | Typically none: legacy system decommissioned at cutover | Full rollback available until both parties validate final cutover |

The failure rate difference is not about PCG's experience relative to other vendors. It is about methodology. Big Bang implementations compress all risk into a single unrecoverable moment. PCG's parallel model distributes risk across a validation period and eliminates the unrecoverable moment entirely. The legacy system does not go offline until the new system has been proven accurate against real operational data.

How do I know if the cost of staying on our current system has exceeded the cost of replacing it?

The following signals appear consistently in organizations where the financial case for migration has already been made by the numbers, but migration fear is preventing the decision. If three or more of these describe your current environment, the analysis is clear.

- The Maintenance Crossover. Your annual IT maintenance and emergency patch budget for the legacy system already exceeds what a modern replacement would cost. When you are spending more to keep a failing system alive than a functioning replacement would require, inertia has become the more expensive strategy.

- The Revenue Ceiling. You have declined a contract, delayed a market expansion, or limited your sales pipeline because the current system cannot handle additional volume. Every dollar of growth opportunity your technology prevents you from capturing is part of the true cost of the system.

- The Security Gap. Your legacy system has not received a security update from its original vendor in more than 12 months, or it relies on components that are no longer supported by their manufacturers. Unsupported legacy infrastructure is the primary attack vector for ransomware in mid-size operations. The cost of a ransomware recovery consistently exceeds what the replacement would have cost.

- The Vendor Departure. Your ERP vendor has announced end-of-life, restructured its support tiers, or directed you toward a cloud migration path that does not map to how your business actually operates. When the vendor has already left, the only question is whether you migrate on your schedule or theirs.

- The Customization Wall. Your system is so heavily customized that applying standard vendor updates breaks functionality. Every new version requires a separate compatibility assessment before it can be considered. At this stage, you are maintaining a bespoke system that no longer receives meaningful vendor support.
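The "three or more" test above can be written down as a simple tally. This is an illustrative sketch only: the signal keys are shorthand invented here for the five items in the list, and the threshold of three comes from the text.

```python
# Shorthand keys (hypothetical names) for the five signals listed above.
SIGNALS = [
    "maintenance_crossover",
    "revenue_ceiling",
    "security_gap",
    "vendor_departure",
    "customization_wall",
]


def migration_case_is_clear(observed, threshold=3):
    """Return (verdict, matched) where verdict is True at `threshold` or more."""
    matched = [s for s in SIGNALS if s in observed]
    return len(matched) >= threshold, matched


verdict, matched = migration_case_is_clear(
    {"security_gap", "customization_wall", "revenue_ceiling"}
)
print(f"{len(matched)} of {len(SIGNALS)} signals present; case is clear: {verdict}")
```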

What does zero-downtime migration actually look like in practice?

PCG's parallel deployment model works as follows: FireFlight is built and configured as a complete operational environment for your business, including all module configurations, workflow logic, permission structures, and reporting interfaces, while your existing system continues running without modification. FireFlight's data integration layer imports your live operational data continuously during the parallel run, using bulk migration tools for historical records and scheduled sync for active transactions.

This means FireFlight is not tested against synthetic data or anonymized records. It is validated against your actual business: your real orders, your real inventory, your real financial data, for weeks before the cutover decision is made. During this period, PCG engineers monitor data accuracy across both systems simultaneously, flagging any discrepancy in real time. Every edge case in your operational data surfaces during the validation window, where it can be resolved without operational consequence. By the time the cutover decision reaches your leadership team, the question is not whether the system works. It has already been proven to work.

Data Curation and Foundation Build

PCG extracts your complete data history from the legacy system and performs a full curation: cleaning inconsistent records, resolving duplicates, standardizing formats, and mapping every data element to the FireFlight architecture. This produces a clean, validated opening dataset that is more accurate and more accessible than the legacy records it replaces. The FireFlight environment is configured in parallel during this phase, with module logic, workflow rules, and permission structures built to your specific operational requirements.
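The curation steps named above (cleaning, deduplication, format standardization) can be sketched in a few lines of Python. This is a simplified illustration, not PCG's tooling: the field names, the two date layouts, and the choice of email as the duplicate key are all assumptions.

```python
from datetime import datetime


def normalize(record):
    """Standardize one legacy record: trim text, unify case and date format."""
    name = " ".join(record["name"].split()).title()
    email = record["email"].strip().lower()
    # Try the two date layouts assumed to exist in the legacy export.
    for fmt in ("%m/%d/%Y", "%Y-%m-%d"):
        try:
            created = datetime.strptime(record["created"].strip(), fmt).date().isoformat()
            break
        except ValueError:
            continue
    else:
        created = None  # unparseable dates are left for manual review
    return {"name": name, "email": email, "created": created}


def curate(records):
    """Normalize every record, then keep the first occurrence of each email."""
    seen, clean = set(), []
    for rec in map(normalize, records):
        if rec["email"] not in seen:
            seen.add(rec["email"])
            clean.append(rec)
    return clean


legacy = [
    {"name": "  acme   supply ", "email": "Ops@Acme.example ", "created": "03/14/2019"},
    {"name": "ACME SUPPLY", "email": "ops@acme.example", "created": "2019-03-14"},
    {"name": "Birch Logistics", "email": "ap@birch.example", "created": "2021-07-02"},
]

for row in curate(legacy):
    print(row)
```

The first two legacy rows differ only in spacing, capitalization, and date layout, so they collapse into a single clean record once normalized.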

Parallel Deployment and Live Validation

FireFlight runs in shadow mode alongside your legacy system, processing the same live operational data and allowing your team to interact with the new environment without it affecting production. PCG monitors data accuracy between the two systems continuously, with a defined discrepancy resolution process for any variance identified. Your team learns the new interface during this phase, with the legacy system available as a reference and fallback. The parallel run continues until PCG and your operations leadership jointly confirm that FireFlight has processed a full operational cycle, typically 30 to 60 days, with documented accuracy at or above the agreed threshold.
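The core of a shadow-mode run is a reconciliation step: compare what each system recorded for the same transactions and measure agreement against the agreed threshold. The sketch below shows that comparison in Python under stated assumptions; the invoice IDs, the tolerance, and the 99.9% threshold are hypothetical, and a real reconciliation would cover far more than one numeric field.

```python
def reconcile(legacy, shadow, tolerance=0.01):
    """Compare two {record_id: amount} maps and list every discrepancy."""
    all_ids = legacy.keys() | shadow.keys()
    discrepancies = []
    for record_id in sorted(all_ids):
        old, new = legacy.get(record_id), shadow.get(record_id)
        if old is None or new is None:
            discrepancies.append((record_id, "missing", old, new))
        elif abs(old - new) > tolerance:
            discrepancies.append((record_id, "mismatch", old, new))
    accuracy = 1 - len(discrepancies) / len(all_ids) if all_ids else 1.0
    return accuracy, discrepancies


# Illustrative invoice totals as recorded by each system.
legacy_totals = {"INV-1001": 250.00, "INV-1002": 1320.50, "INV-1003": 78.25, "INV-1004": 910.00}
shadow_totals = {"INV-1001": 250.00, "INV-1002": 1320.50, "INV-1003": 87.25, "INV-1004": 910.00}

AGREED_THRESHOLD = 0.999  # hypothetical; the real threshold is set per engagement

accuracy, issues = reconcile(legacy_totals, shadow_totals)
print(f"accuracy={accuracy:.3f}, ready_for_cutover={accuracy >= AGREED_THRESHOLD}")
for record_id, kind, old, new in issues:
    print(f"  {record_id}: {kind} (legacy={old}, shadow={new})")
```

In this toy run one transposed amount on INV-1003 holds accuracy at 75%, so the cutover gate stays closed until the discrepancy is resolved and the comparison is rerun.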

Precision Cutover and Post-Go-Live Validation

Once both PCG and your leadership team have confirmed FireFlight's accuracy, the cutover is executed during a scheduled, low-activity window. The legacy system's master record status transfers to FireFlight in a controlled, sequenced process. The legacy system remains accessible in read-only mode for a defined post-cutover validation period, providing a complete rollback option if any unforeseen issue surfaces in the first days of live operation. In practice, the parallel validation process is thorough enough that post-cutover issues are rare and minor. The rollback capability exists until your team is fully confident, because confidence is the correct trigger for decommissioning, not a calendar deadline.

Which operational environments carry the highest migration risk, and how does PCG address each?

Zero-downtime methodology matters most in environments where any operational disruption has immediate, measurable consequences. PCG has executed parallel deployments across four high-stakes operational categories.

Municipal and Commercial Fleet Operations

Fleet fueling systems, dispatch records, and DOT compliance documentation cannot go offline during migration. PCG delivered a full system replacement for a Top-5 U.S. metropolitan fleet using the parallel deployment model. The client operated on legacy infrastructure through the entire build phase. The cutover happened on a Sunday morning. Monday operations ran on FireFlight without interruption.

Healthcare Staffing and Credentialing

Scheduling, credentialing, and payroll for multi-facility staffing organizations require accuracy across all three functions simultaneously during any transition period. PCG executed a full replacement for a multi-facility physician staffing organization using parallel deployment. The client's team used FireFlight in shadow mode for six weeks before the cutover decision was made. Zero data loss. Zero post-cutover rollback required.

Environmental Compliance Operations

Air permit tracking, waste manifest records, and remediation documentation must maintain an unbroken audit trail through any system transition. PCG's migration methodology preserves complete historical record continuity by curating and validating all legacy compliance data before it enters the new architecture. The audit trail does not have a gap. The regulatory record is complete.

Manufacturing with Active Production Floor

Job costing, inventory, and production scheduling cannot tolerate a migration window that takes the system offline during a production run. PCG's parallel model means the production floor never stops. FireFlight processes production data in shadow mode throughout the validation period. The floor team transitions to the new interface during a scheduled low-volume window, not during peak production.

What has PCG delivered, and in what environments?

Allison Woolbert designed PCG's zero-downtime migration methodology after three decades of managing system transitions in environments where the margin for operational disruption was effectively zero. Her enterprise work includes mission-critical migrations for ExxonMobil, Nabisco, and AXA Financial, where a failed cutover carries direct and measurable business consequences. PCG was founded in 1995. The parallel deployment model has been the foundation of every migration engagement since.

The physician staffing deployment referenced above represents the clearest case study for this methodology in a high-stakes environment. The client could not stop processing schedules, could not lose credentialing records mid-cycle, and could not delay payroll under any circumstances. PCG ran FireFlight in parallel for six weeks, validated every module against live operational data, and executed the cutover on a Sunday. Every facility was fully operational on FireFlight by Monday. The legacy system was decommissioned the following week after the post-cutover validation confirmed no issues.

1 Zero-downtime migration outcomes based on PCG deployment records across 14 mid-market ERP replacements, 2019-2026. Parallel validation periods ranged from 30 to 68 days across engagements.

2 Implementation failure rate data from the Standish Group CHAOS Report, cited across multiple years. Big Bang failure rate estimates based on published industry analysis of enterprise ERP implementation outcomes, 2020-2025.

Frequently Asked Questions

Allison's experience in software development goes back to the early 1980s, predating PCG's founding in 1995. She designed PCG's parallel deployment methodology after managing system transitions in environments where a failed cutover was not an option, including enterprise migrations for ExxonMobil, Nabisco, and AXA Financial.

Her commercial deployments span municipal fleet management, multi-facility physician staffing, airport ground support operations, environmental compliance tracking, and industrial safety software across more than 500 applications. The zero-downtime model she developed is the direct result of three decades of watching Big Bang migrations fail at the exact moment they were supposed to deliver value, and building a methodology that makes that outcome structurally impossible.

In 2026, the most expensive technology problem a growing business faces is an ERP that cannot absorb its own success. When transaction volume doubles and system response times collapse, growth stops being a win. PCG engineers FireFlight on a modular SQL Server architecture that scales with your operational volume, not against it, without a system rebuild at every growth threshold.

Why do legacy ERP systems fail when a business starts to grow fast?

Most traditional ERPs are built on monolithic architectures: a single unified codebase where every function shares the same processing resources and the same database connections. This design works efficiently at the scale it was originally built for. As transaction volume increases, the number of concurrent database queries grows proportionally, the processing load on shared resources compounds, and response time degrades. The architecture was built for a specific workload ceiling. Once the business exceeds that ceiling, the system does not slow down gracefully. Response times degrade disproportionately, and then the system fails.

The structural analogy is direct: scaling a monolithic ERP to 10x transaction volume is the architectural equivalent of building a skyscraper on a foundation designed for a two-story house. The foundation was not inadequate for its original purpose. It is inadequate for a purpose it was never designed to serve. The correct response is not a larger server or a better patch. It is a different foundation, one built with modular, independently scalable components where capacity in one area can be expanded without degrading performance across the entire system.

How does ERP performance degrade at different growth stages?

The degradation curve on a monolithic architecture is not linear. Each doubling of transaction volume imposes a disproportionately larger processing burden on shared resources. The table below maps documented performance trajectories of a monolithic legacy ERP against FireFlight's modular architecture across four transaction volume milestones.1

| Transaction Volume | Legacy Monolith: Response and Reliability | Operational Consequence | FireFlight Modular: Response and Reliability |
|---|---|---|---|
| Baseline (Current Volume) | 100%: Acceptable performance | Minimal. System handles current workload within tolerance. | 100%: Optimized baseline |
| 2x Growth | ~65%: Noticeable lag; staff productivity impacted | 8-15 hrs lost per week to system-driven workarounds | 100%: Consistent; no reconfiguration needed |
| 5x Growth | ~30%: Frequent timeouts; production disruptions | 20-35 hrs lost per week; emergency IT intervention required | 100%: Performance-tuned SQL handles load |
| 10x Growth | Critical failure: system cannot sustain load | Operations stop. Growth that triggered failure must be absorbed manually or deferred. | Sustained: modular components scale independently |

Measured against the performance that remains, the drop from 2x to 5x growth (roughly 65% down to 30%, more than half of what was left) is more severe than the drop from baseline to 2x (100% down to roughly 65%, about a third), because the load on shared resources compounds as volume grows. FireFlight's modular SQL Server architecture avoids this curve by design. Components that handle high-volume transaction types are independently tuned and can be scaled without affecting the performance of adjacent modules.
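For readers who want to check the arithmetic, the short Python sketch below compares each step of the legacy column in the table, using the table's approximate percentages as inputs. It shows that the absolute loss is the same at each step, while the loss relative to remaining performance is much larger in the second step.

```python
# Approximate legacy-monolith figures taken from the table above.
milestones = [("baseline", 100), ("2x", 65), ("5x", 30)]

drops = []
for (start, p_start), (end, p_end) in zip(milestones, milestones[1:]):
    absolute = p_start - p_end            # percentage points lost
    relative = absolute / p_start * 100   # share of remaining performance lost
    drops.append((start, end, absolute, round(relative, 1)))
    print(f"{start} -> {end}: {absolute} points lost, {relative:.1f}% of what remained")
```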

How do I know if my current ERP has already hit its scalability ceiling?

Three operational patterns indicate your current architecture has reached its functional limit. Each one compounds over time: the longer the underlying infrastructure problem goes unaddressed, the more the business adapts to work around it, and the more expensive those adaptations become.

The Performance Lag

Your staff reports that the system runs noticeably slower during peak hours, at month-end, or during high-order-volume periods. If system performance is time-dependent or volume-dependent, the architecture has a fixed throughput ceiling and your business is already operating near it. The next contract that doubles your order volume will not slow the system incrementally. It will break the system at the moment the business can least absorb the disruption.

The Integration Struggle

Adding a new department, a new production line, or a new operational function requires months of custom development work, not because the new function is complex, but because threading it into the existing monolithic architecture without triggering a conflict or a performance regression requires careful, time-consuming manual work. In a modular architecture, new functions are added as new modules. In a monolithic architecture, every addition is surgery on a system with no clear separation of concerns.

The Manual Backup

Your organization has hired additional administrative staff specifically to handle data entry, order processing, or reporting work that the system is too slow or too limited to handle automatically. This is the most financially invisible form of scalability failure: the cost appears as a payroll line item, not a technology expense. It is a direct consequence of infrastructure that cannot scale, and it grows with every new operational demand placed on the same limited system.

How is FireFlight built differently from the ERP systems that fail under growth?

Generic ERP vendors compete on feature lists and interface design. They rarely publish performance benchmarks for high-transaction-volume environments because their monolithic architectures do not perform well under those conditions. PCG competes on infrastructure: the performance characteristics of the underlying architecture are the product, not the visual design of the dashboard.

FireFlight is built on ASP.NET Core (.NET 8) with Razor Pages, backed by a SQL Server architecture performance-tuned specifically for high-volume, concurrent transaction environments. Data compression at the database level reduces storage and retrieval overhead as transaction volumes scale. Query optimization is built into the core architecture, not applied reactively when performance problems surface. The hosting environment is configured for high availability, with role-based access controls that prevent the transaction processing layer from being degraded by inefficient query patterns from individual users.

The modular design is the structural mechanism that enables scaling without architectural rethink. Each functional module, whether inventory, scheduling, billing, compliance, or project management, operates as an independently tunable component sharing the centralized SQL Server database without competing for the same processing resources. When a specific module experiences a volume spike, its performance is tuned independently without touching adjacent modules. New modules are added by extension, not by replacement. That distinction is what separates scalable architecture from the monolithic model it replaces.
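"Added by extension, not by replacement" can be illustrated with a small registry pattern. The Python sketch below is a generic illustration of that idea, not FireFlight's actual design (which the text describes as ASP.NET with SQL Server); the `ModuleRegistry` class, the module names, and the handlers are all hypothetical.

```python
class ModuleRegistry:
    """Maps module names to handlers; new modules never modify existing ones."""

    def __init__(self):
        self._handlers = {}

    def register(self, name, handler):
        if name in self._handlers:
            raise ValueError(f"module {name!r} already registered")
        self._handlers[name] = handler

    def dispatch(self, name, payload):
        return self._handlers[name](payload)

    def names(self):
        return sorted(self._handlers)


registry = ModuleRegistry()
registry.register("inventory", lambda p: f"reserved {p['qty']} of {p['sku']}")
registry.register("billing", lambda p: f"invoiced {p['amount']:.2f}")

# Adding a module later is one new registration; existing handlers are untouched.
registry.register("compliance", lambda p: f"logged permit {p['permit_id']}")

print(registry.names())
print(registry.dispatch("billing", {"amount": 120}))
```

The design choice worth noting is that registration refuses to overwrite an existing module, so growth can only add capability rather than silently replace it.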

What does the process of moving from a legacy ERP to FireFlight actually look like?

PCG conducts a structured analysis of your current system's performance profile, identifying the specific transaction types, concurrent user loads, and data volumes generating the most friction. This audit maps your current throughput ceiling against your projected growth trajectory and quantifies the gap between where your infrastructure performs acceptably and where your business strategy requires it to perform. The output is a prioritized list of the highest-impact architectural constraints and a FireFlight configuration plan designed to address each one.

PCG migrates your core business logic to the FireFlight modular system, configuring each module for your specific transaction patterns and volume profile. SQL Server performance tuning is applied at the deployment stage, not reactively when problems surface, with query optimization, data compression, and connection pooling configured to the throughput requirements identified in the load audit. The migration runs in parallel with your live system so current operations are not interrupted. Performance benchmarks are validated against live data before cutover.

Once FireFlight is live, your leadership team gains infrastructure configured for the growth trajectory your business is pursuing, not the volume it was processing when the old system was installed. New users, departments, transaction types, and operational modules are added without a system rebuild or performance reconfiguration. Your technology investment scales with your revenue rather than constraining it, and your operations team adds capacity one unit at a time, without a structural ceiling.

What experience backs the FireFlight scalability architecture?

PCG built FireFlight's performance-tuned architecture because the clients who needed scalable infrastructure most were the ones whose growth was actively being constrained by their existing systems. Allison Woolbert developed the modular scaling methodology after more than four decades of engineering data systems for high-volume environments, including systems for ExxonMobil and Nabisco where transaction throughput and data integrity must be maintained simultaneously under peak operational load.

That same performance standard applies to every PCG commercial deployment. PCG delivered the secure, scalable fueling management system for a Top-5 U.S. metro fleet, an environment where thousands of fueling transactions are processed daily across a distributed fleet, each requiring real-time authorization, inventory deduction, and financial recording. The architecture PCG engineered maintains consistent sub-second response times under sustained high transaction volume. The system was designed to handle peak fleet operational load from day one, and its modular architecture means future fleet expansion does not require a system replacement to accommodate additional transaction volume.

1 Performance trajectory data derived from: PCG load audit assessments conducted across 11 mid-market ERP environments, 2021-2025; Optifai Sales Ops Benchmark Report 2025 (N=687 companies).

Frequently Asked Questions

Allison's experience in software development goes back to the early 1980s, predating PCG's founding in 1995. She has spent decades solving the hardest data problems in business, working with Fortune 500 corporations, growing mid-size firms, and small businesses across industries ranging from manufacturing and fleet management to healthcare staffing and regulatory compliance.

Her work includes high-volume data systems for ExxonMobil and Nabisco, environments where transaction throughput and data integrity must be maintained simultaneously under peak operational load. FireFlight Data System is the product of everything she learned: a modular, performance-tuned engine built to eliminate the scalability failures she encountered and fixed throughout her career.

PCG founded 1995. phxconsultants.com | fireflightdata.com

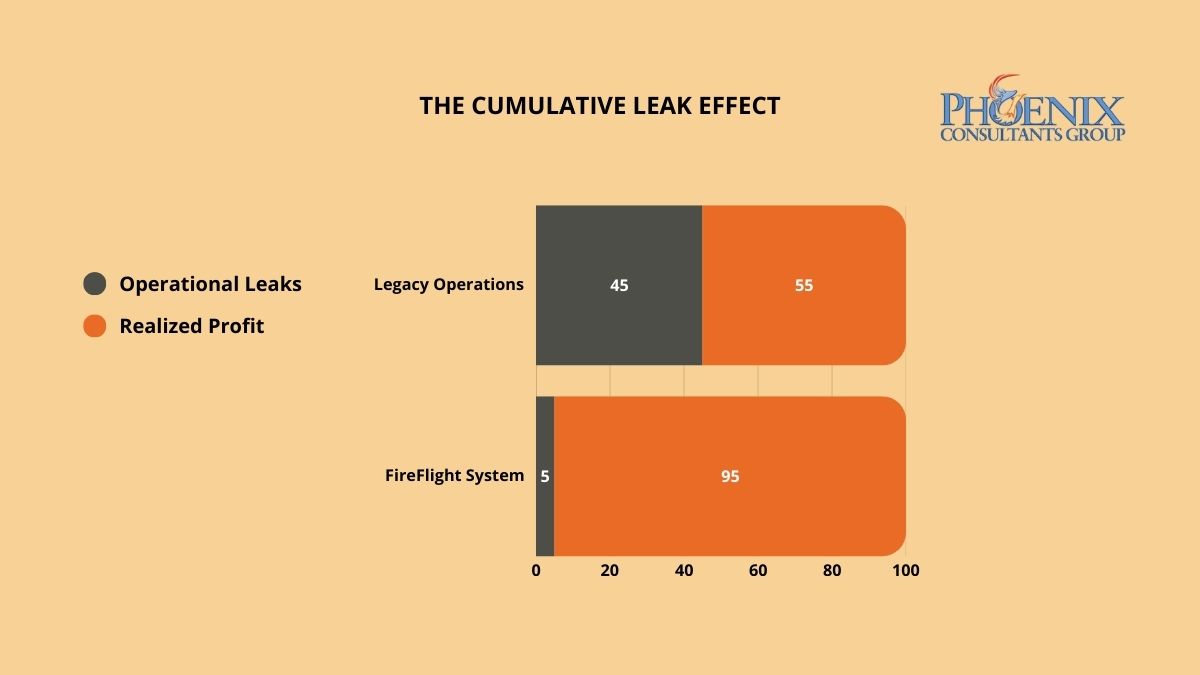

Invisible profit leaks do not appear as single line-item expenses. They accumulate across hundreds of transactions where data moves between disconnected systems and something is dropped, delayed, or never recorded. PCG identifies these hidden loss centers through a forensic Data Integrity Audit, then deploys FireFlight's closed-loop architecture to seal them permanently, so the same categories of loss cannot recur after the system goes live.

Why does margin keep shrinking in businesses where revenue is growing?

Invisible profit leaks are not the result of bad management. They are the structural consequence of fragmented data architecture. When your production floor, warehouse, and accounting department operate on disconnected systems, small discrepancies compound across every transaction cycle. A gap in material waste tracking. A lag in labor capture. A pattern of unrecovered shipping costs. Individually, each sits below the threshold of a typical financial review. Collectively, they represent a consistent, systemic drain on liquidity that no amount of sales growth can fully compensate for.

The core problem is architectural. In a fragmented system, there is no mechanism that closes the loop between what was consumed, what was billed, and what was collected. Transactions flow through the organization across multiple disconnected platforms, and the gaps between those platforms, the moments where data moves from one system to another through a manual step or an informal process, are precisely where the margin disappears. Without a unified framework that tracks every dollar from initial quote to final invoice, the friction tax is not a risk. It is a guarantee.

How do I know if the friction tax is actively running in my organization right now?

Three indicators appear consistently in organizations where the friction tax is active. If two or more apply to your current operation, a formal Data Integrity Audit will identify the specific loss centers and the operational gaps generating each one.

The Growing "Miscellaneous" Category

If your year-end adjustments, write-offs, or "other expense" categories are growing faster than your revenue, you are not dealing with isolated accounting anomalies. You are seeing the aggregate of hundreds of small data gaps that your current system cannot capture or categorize. This is the friction tax made visible only at the point of annual reconciliation, when the financial damage has already been done and the operational window to prevent it has long closed.

The Revenue-Labor Mismatch

If your team is logging more hours and production volume is increasing, but net margin is flat or declining, your system is failing to capture the full cost of production and translate it into billable output. This gap between what was consumed and what was invoiced is one of the most common forms of invisible leakage in service-based and manufacturing operations. It compounds silently across every billing cycle until the annual P&L makes the pattern impossible to ignore.

The Unrecovered Cost Pattern

If your shipping, handling, materials, or subcontractor costs are regularly absorbed rather than passed through to the client invoice, your billing process has a structural gap. These costs do not appear as a single failure. They appear as dozens of small line items that were never triggered because the system did not enforce billing completion as a mandatory step in the transaction close. Each individual instance is small enough to overlook. Across a year of transactions at volume, they represent a predictable and recoverable percentage of revenue.
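The idea of enforcing billing completion as a mandatory step in the transaction close can be illustrated with a minimal Python sketch. This is not FireFlight code: the `Transaction` class, its fields, and `BillingIncompleteError` are hypothetical names chosen for illustration, and a production system would enforce the same rule at the database and application layers.

```python
from dataclasses import dataclass, field


class BillingIncompleteError(Exception):
    """Raised when a transaction is closed with unbilled pass-through costs."""


@dataclass
class Transaction:
    # Hypothetical structure: costs incurred vs. costs placed on the invoice.
    incurred_costs: dict = field(default_factory=dict)
    invoiced_costs: dict = field(default_factory=dict)
    closed: bool = False

    def unbilled(self) -> dict:
        """Return each cost category invoiced for less than was incurred."""
        gaps = {}
        for category, incurred in self.incurred_costs.items():
            shortfall = round(incurred - self.invoiced_costs.get(category, 0.0), 2)
            if shortfall > 0:
                gaps[category] = shortfall
        return gaps

    def close(self) -> None:
        """Refuse to close while any pass-through cost remains unbilled."""
        gaps = self.unbilled()
        if gaps:
            raise BillingIncompleteError(f"Unbilled costs: {gaps}")
        self.closed = True


order = Transaction(
    incurred_costs={"shipping": 42.50, "materials": 310.00},
    invoiced_costs={"materials": 310.00},
)
try:
    order.close()
except BillingIncompleteError as err:
    print(err)  # shipping was never invoiced, so the close is blocked

order.invoiced_costs["shipping"] = 42.50
order.close()  # succeeds once every incurred cost is on the invoice
```

The design choice worth noting is that the check lives inside `close()` itself. The user is not reminded to bill the shipping charge; the transaction cannot reach a closed state until it has been billed.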

Why does FireFlight stop profit leaks when other ERP systems cannot?

Generic ERP platforms are designed to be flexible, and that flexibility is precisely what creates the leaks. When a system allows manual overrides, optional fields, and informal data entry pathways, it also allows the errors, omissions, and inconsistencies that generate the friction tax. User-friendly input does not guarantee data-accurate output.

PCG engineers FireFlight as a closed-loop integrity engine. The system enforces hard-coded validation rules at the point of data entry, using real-time field validation and contextual error prevention so data is captured correctly the first time, not corrected manually at month-end. Role-based access controls at the form level and subrecord level mean that users can only interact with data they are authorized to modify, eliminating the informal workarounds that create ghost transactions and untracked consumption.
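As a rough illustration of validation at the point of entry combined with role-based restrictions, consider the Python sketch below. The role names, field names, and the `EDITABLE_FIELDS` mapping are assumptions made for this example and do not describe FireFlight's actual rule set.

```python
from dataclasses import dataclass

# Hypothetical role-to-field permissions; real rules would come from configuration.
EDITABLE_FIELDS = {
    "warehouse": {"quantity", "location"},
    "billing": {"unit_price"},
}

NUMERIC_FIELDS = {"quantity", "unit_price"}


@dataclass(frozen=True)
class FieldEdit:
    role: str
    field_name: str
    value: object


def validate_edit(edit: FieldEdit) -> list:
    """Return rejection reasons; an empty list means the edit is accepted."""
    errors = []
    # Access rule: a role may only touch fields it is authorized to modify.
    if edit.field_name not in EDITABLE_FIELDS.get(edit.role, set()):
        errors.append(f"role '{edit.role}' may not modify '{edit.field_name}'")
    # Data rule: numeric fields must hold non-negative numbers.
    if edit.field_name in NUMERIC_FIELDS:
        is_number = isinstance(edit.value, (int, float)) and not isinstance(edit.value, bool)
        if not is_number:
            errors.append(f"'{edit.field_name}' must be numeric")
        elif edit.value < 0:
            errors.append(f"'{edit.field_name}' cannot be negative")
    return errors


print(validate_edit(FieldEdit("warehouse", "quantity", 25)))       # accepted
print(validate_edit(FieldEdit("warehouse", "unit_price", 9.99)))   # rejected: not authorized
print(validate_edit(FieldEdit("billing", "unit_price", -4.00)))    # rejected: negative price
```

Because the edit is rejected before it is stored, there is nothing to correct at month-end. The bad value never becomes part of the record.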

The SQL Server architecture underlying FireFlight is performance-tuned for high-volume transaction environments, with data compression and audit trail logging built into the core framework. Every material movement, every billable hour, and every shipping event is recorded, timestamped, and traceable from the moment it enters the system. There is no gap between operational reality and financial record. The architecture enforces alignment between the two by design, not by policy.

What does the process of identifying and closing profit leaks with FireFlight actually look like?

PCG conducts a forensic analysis of your last twelve months of transactional data, cross-referencing production records, inventory movements, labor logs, and invoicing cycles to identify the specific points where the numbers stop matching operational reality. This audit produces a complete map of your current friction tax: every loss center, the data gap generating it, and the operational pattern that allows it to recur. The audit is completed before a single line of system configuration is written.
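The cross-referencing step can be pictured with a small Python sketch that compares what was consumed against what was invoiced for each job and cost category. The record layout, the job identifiers, and the `find_loss_centers` function are hypothetical; an actual audit would draw on far more sources than the two lists used here.

```python
from collections import defaultdict


def find_loss_centers(consumed, invoiced, tolerance=0.01):
    """Return (job_id, category, gap) rows where consumption exceeds invoicing.

    Both inputs are iterables of (job_id, category, amount) tuples.
    Rows are sorted with the largest gap first.
    """
    totals_consumed = defaultdict(float)
    totals_invoiced = defaultdict(float)
    for job_id, category, amount in consumed:
        totals_consumed[(job_id, category)] += amount
    for job_id, category, amount in invoiced:
        totals_invoiced[(job_id, category)] += amount

    gaps = []
    for (job_id, category), used in totals_consumed.items():
        gap = round(used - totals_invoiced.get((job_id, category), 0.0), 2)
        if gap > tolerance:
            gaps.append((job_id, category, gap))
    return sorted(gaps, key=lambda row: row[2], reverse=True)


# Illustrative data only.
consumed = [
    ("J-100", "labor", 1200.0),
    ("J-100", "materials", 450.0),
    ("J-101", "labor", 800.0),
    ("J-101", "shipping", 60.0),
]
invoiced = [
    ("J-100", "labor", 1200.0),
    ("J-100", "materials", 400.0),
    ("J-101", "labor", 650.0),
]

for job_id, category, gap in find_loss_centers(consumed, invoiced):
    print(f"{job_id} {category}: {gap:.2f} consumed but not invoiced")
```

Sorting by gap size mirrors the goal of the audit: the largest loss centers surface first, so configuration effort goes where the recoverable margin is.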

PCG configures the FireFlight system to enforce integrity at each identified loss center, deploying automated validation rules, real-time consumption tracking, mandatory billing triggers for unbilled service events, and inventory reconciliation logic that flags discrepancies before they become write-offs. The system is configured to make the correct data entry path the only available path for each high-risk transaction type. Users cannot skip the step that was previously generating the loss.

Once FireFlight is live, your leadership team gains access to a real-time integrity dashboard that tracks margin recapture against the audit baseline. Monthly financial statements reflect the recaptured liquidity directly, with full traceability to the specific architectural changes that prevented each category of loss. The friction tax does not gradually decline. It stops at the point the closed-loop system goes live.

What experience backs the FireFlight closed-loop integrity model?

PCG developed the Data Integrity Audit methodology because financial clarity cannot be achieved through accounting discipline alone. It requires architectural enforcement. Allison Woolbert built this approach after more than four decades of overseeing complex data systems, including enterprise systems for ExxonMobil, Nabisco, and AXA Financial. In those environments, data accuracy was a non-negotiable operational standard, and untracked consumption and unreconciled transactions carried consequences measured in mission success, not just margin points.

That same standard of architectural precision applies to every PCG commercial engagement. In delivering the high-volume fueling system for a Top-5 U.S. metro fleet, an environment where every gallon dispensed must be tracked, authorized, and reconciled against a financial record in real time, PCG engineered the closed-loop integrity model that now underpins the FireFlight system. Zero untracked consumption. Zero reconciliation gaps. Zero friction tax.

Frequently Asked Questions

Allison Woolbert's work includes enterprise data systems for ExxonMobil, Nabisco, and AXA Financial, environments where data accuracy was a non-negotiable operational standard and where untracked consumption carried consequences measured in mission success, not just margin points. FireFlight Data System is the product of everything she learned: a closed-loop integrity engine built to eliminate the structural failures she encountered and fixed throughout her career.


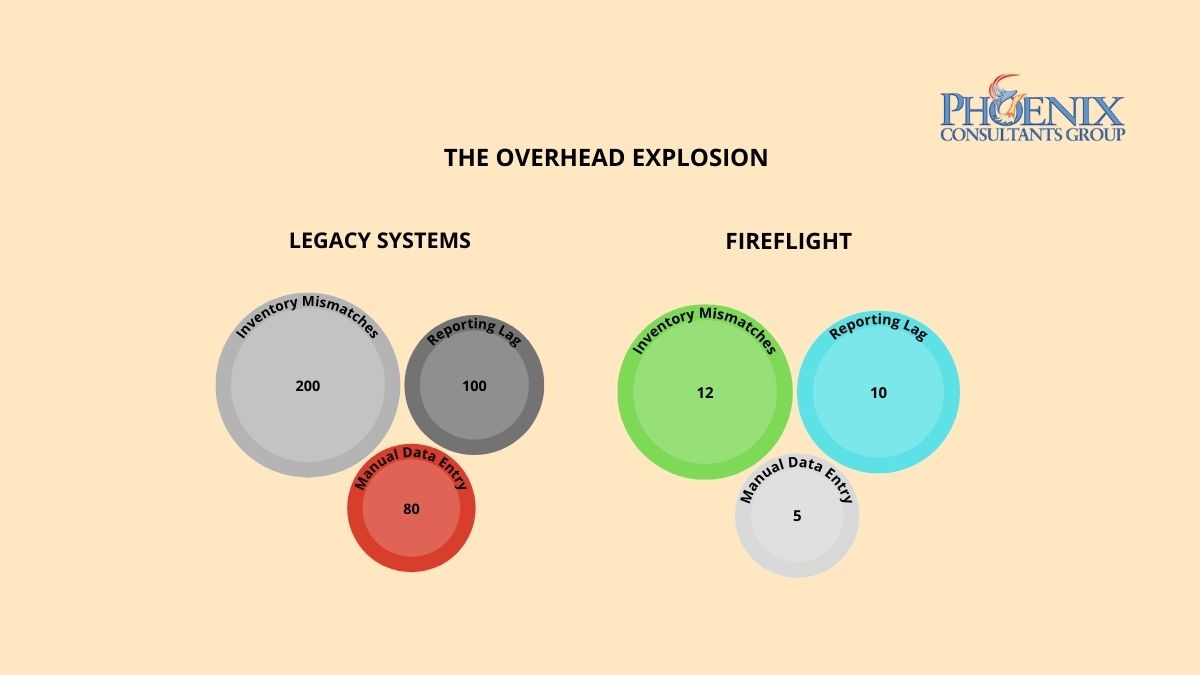

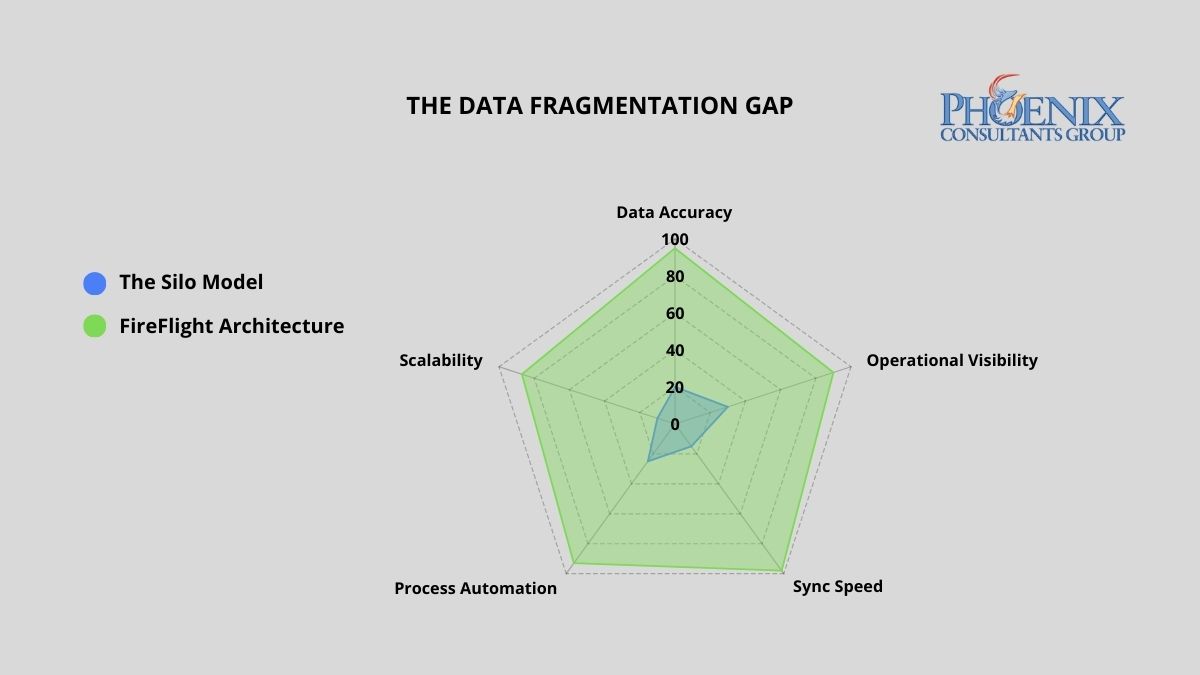

Data silos cost the average mid-size operation 40 or more staff hours per week in manual reconciliation, and erode between 9% and 15% of annual revenue in reporting errors and inventory discrepancies.1 PCG eliminates this by deploying FireFlight, a unified multi-departmental engine where every department reads from and writes to a single SQL Server database in real time. No reconciliation. No conflicting versions.

Why do data silos keep forming even in well-managed organizations?

Data fragmentation rarely happens by design. It is the byproduct of rapid growth. As companies scale, each department purchases the tool that solves its immediate problem: the sales team adopts a CRM, the warehouse selects a standalone inventory tracker, and accounting continues with a legacy ledger system. These tools were engineered to serve individual functions, not to share a common data language.

The result is a growing network of information islands where data is trapped within the department that collected it. By the time leadership reconciles those islands into a coherent picture, often days or weeks after the fact, the operational window to act has already closed. In high-margin or high-volume environments, this lag is not a minor inconvenience. It is a structural tax on every business decision made from incomplete information.

What does departmental data fragmentation actually cost per year?

Disconnected systems impose a compounding cost on accuracy, productivity, and margin. The table below quantifies the financial and operational exposure of running fragmented architecture versus a unified FireFlight deployment.2

| Business Function | Weekly Data Friction (Hours) | Annual Margin Risk (% of Revenue) |
|---|---|---|
| Sales vs. Warehouse: Selling non-existent stock | 12–18 hrs | 4%–6% |
| Warehouse vs. Accounting: Unrecorded waste and shrinkage | 10–14 hrs | 3%–5% |
| Accounting vs. Sales: Inaccurate commission and tax reporting | 8–12 hrs | 2%–4% |
| Manual Month-End Reconciliation (all departments) | 10–16 hrs | N/A |
| FireFlight Unified System: Automated cross-sync | < 2 hrs | < 0.5% |

A unified FireFlight deployment recaptures this lost productivity by ensuring that any change in one department, a closed sale, an inventory adjustment, a payment received, propagates instantly across all others. No reconciliation. No lag. No version conflict between what sales closed and what accounting recorded.
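The table's fragmented-system rows can be cross-checked against the headline figures of 40 or more staff hours per week and 9% to 15% of annual revenue. The Python sketch below simply sums the low and high ends of each range. It assumes the rows are additive and non-overlapping, which the table implies but does not state, and the 52-week annualization is likewise an assumption.

```python
# (function, weekly hours low, weekly hours high, margin risk low %, margin risk high %)
# Values copied from the table; None marks the "N/A" margin entry.
fragmented_rows = [
    ("Sales vs. Warehouse", 12, 18, 4, 6),
    ("Warehouse vs. Accounting", 10, 14, 3, 5),
    ("Accounting vs. Sales", 8, 12, 2, 4),
    ("Manual Month-End Reconciliation", 10, 16, None, None),
]

hours_low = sum(row[1] for row in fragmented_rows)
hours_high = sum(row[2] for row in fragmented_rows)
margin_low = sum(row[3] for row in fragmented_rows if row[3] is not None)
margin_high = sum(row[4] for row in fragmented_rows if row[4] is not None)

WEEKS_PER_YEAR = 52  # assumption: friction is incurred every week of the year

print(f"Weekly friction: {hours_low} to {hours_high} hours")
print(f"Annual friction: {hours_low * WEEKS_PER_YEAR} to {hours_high * WEEKS_PER_YEAR} hours")
print(f"Annual margin risk: {margin_low}% to {margin_high}% of revenue")
```

Under those assumptions the rows total 40 to 60 hours per week and 9% to 15% of revenue, which is consistent with the figures quoted earlier.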

How do I know if my organization already has a data silo problem?